Basic Hive Operations

Table creation syntax

CREATE [EXTERNAL] TABLE [IF NOT EXISTS] table_name

[(col_name data_type [COMMENT col_comment], …)]

[COMMENT table_comment]

[PARTITIONED BY (col_name data_type [COMMENT col_comment], …)]

[CLUSTERED BY (col_name, col_name, …)

[SORTED BY (col_name [ASC|DESC], …)] INTO num_buckets BUCKETS]

[ROW FORMAT row_format]

[STORED AS file_format]

[LOCATION hdfs_path]

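Notes:

- CREATE TABLE creates a table with the given name. If a table with the same name already exists, an exception is thrown; the IF NOT EXISTS option suppresses it.

- The EXTERNAL keyword creates an external table and lets you specify a path to the actual data (LOCATION) at creation time. When Hive creates a managed (internal) table, it moves the data into the path the data warehouse points to; for an external table, it only records where the data lives and does not change its location. When a table is dropped, a managed table's metadata and data are deleted together, whereas an external table loses only its metadata and the data stays in place.

- LIKE copies the structure of an existing table without copying its data.

- ROW FORMAT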

DELIMITED

[FIELDS TERMINATED BY char]

[COLLECTION ITEMS TERMINATED BY char]

[MAP KEYS TERMINATED BY char]

[LINES TERMINATED BY char]

| SERDE serde_name [WITH SERDEPROPERTIES (property_name=property_value, property_name=property_value, …)]

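Users can define a custom SerDe or use a built-in one when creating a table. If ROW FORMAT is not specified, or ROW FORMAT DELIMITED is specified, the built-in SerDe is used. Users also declare the table's columns at creation time; together with the columns they can specify a custom SerDe, and Hive uses the SerDe to determine the data of each column.

- STORED AS SEQUENCEFILE | TEXTFILE | RCFILE

If the data files are plain text, use STORED AS TEXTFILE. If the data needs to be compressed, use STORED AS SEQUENCEFILE.

- CLUSTERED BY

Each table or partition can be further organized into buckets, which are a finer-grained division of the data. Buckets are organized on a column: Hive hashes the column value and takes the remainder after dividing by the number of buckets to decide which bucket a record is stored in. There are two reasons to organize a table (or partition) into buckets:

(1) More efficient query processing. Buckets add extra structure to a table, and Hive can exploit that structure for some queries. In particular, a join of two tables that are bucketed on the same columns (which include the join columns) can be implemented efficiently as a map-side join. For a JOIN on a column that both tables share, if both tables are bucketed on it, only the buckets holding the same column values need to be joined, which greatly reduces the amount of data involved in the JOIN.

(2) More efficient sampling. When working with large data sets, it is very convenient during query development and tuning to be able to trial-run a query on a small part of the data.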
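The bucketing rule described above (hash the column value, then take the remainder modulo the number of buckets) can be sketched in Python. This is only a model and not Hive's implementation: it assumes the hash of an integer key is the integer itself, which may not hold for every Hive version, and `assign_bucket` and `bucketize` are hypothetical helper names.

```python
from collections import defaultdict


def assign_bucket(key: int, num_buckets: int) -> int:
    """Return the bucket index for an integer key.

    Assumption: the hash of an integer is the integer itself; a real Hive
    installation may use a different hash function.
    """
    return (key & 0x7FFFFFFF) % num_buckets


def bucketize(rows, num_buckets):
    """Group (id, name) rows by the bucket their id falls into."""
    buckets = defaultdict(list)
    for row in rows:
        buckets[assign_bucket(row[0], num_buckets)].append(row)
    return dict(buckets)


# Illustrative rows with ids 1 to 7, split into 2 buckets.
rows = [(i, f"t{i}") for i in range(1, 8)]
buckets = bucketize(rows, 2)
for index in sorted(buckets):
    print(index, buckets[index])
```

Under this assumption even ids land in bucket 0 and odd ids in bucket 1, so two tables bucketed the same way on the join column only need matching buckets compared.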

Exercises

Create an external table

hive> create external table td_ext(id int, name string) row format delimited fields terminated by ',' location '/tt_t1';

OK

Time taken: 1.605 seconds

hive> desc td_ext;

OK

id int

name string

Time taken: 0.508 seconds, Fetched: 2 row(s)

Create a partitioned table

hive> create table td_ext2(id int, name string) partitioned by(part string) row format delimited fields terminated by ',' stored as textfile;

OK

Time taken: 0.195 seconds

hive> desc td_ext2;

OK

id int

name string

part string

# Partition Information

# col_name data_type comment

part string

Time taken: 0.066 seconds, Fetched: 8 row(s)

Load data

hive> load data local inpath '/root/da.txt' into table td_ext2 partition(part="china");

Loading data to table default.td_ext2 partition (part=china)

OK

Time taken: 1.163 seconds

hive> select * from td_ext2;

OK

1 t1 china

2 t2 china

3 t3 china

Time taken: 0.219 seconds, Fetched: 3 row(s)

Drop a table

hive> show tables;

OK

t_t2

td_ext

td_ext2

td_ext3

Time taken: 0.034 seconds, Fetched: 4 row(s)

hive> drop table t_t2;

OK

Time taken: 1.881 seconds

hive> show tables;

OK

td_ext

td_ext2

td_ext3

Time taken: 0.037 seconds, Fetched: 3 row(s)

hive>

Load

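Syntax

LOAD DATA [LOCAL] INPATH 'filepath' [OVERWRITE] INTO

TABLE tablename [PARTITION (partcol1=val1, partcol2=val2 …)]

Notes:

- A Load operation is purely a copy/move operation that places the data files in the location belonging to the Hive table.

- filepath can be:

a relative path, for example: project/data1

an absolute path, for example: /user/hive/project/data1

a full URI including the scheme, for example: hdfs://namenode:9000/user/hive/project/data1

The LOCAL keyword

If LOCAL is specified, the load command looks for filepath in the local file system.

If LOCAL is not specified, the file is located according to the URI in inpath.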

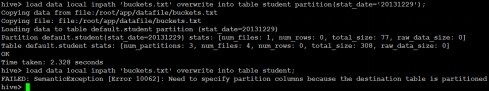

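The OVERWRITE keyword

If OVERWRITE is used, the contents of the target table (or partition) are deleted first, and then the contents of the file/directory that filepath points to are added to the table/partition.

If the target table (or partition) already contains a file whose name conflicts with a file name in filepath, the existing file is replaced by the new one.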
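As a rough illustration of the two behaviours just described, the following Python sketch models a table (or partition) directory as a mapping from file name to contents. It is a toy model of the description above, not of Hive's file handling; `load_data` and the file names are hypothetical.

```python
def load_data(table_files: dict, incoming: dict, overwrite: bool = False) -> dict:
    """Model LOAD DATA on a directory given as {file name: contents}."""
    if overwrite:
        # OVERWRITE: existing contents are removed before the new files are added.
        return dict(incoming)
    merged = dict(table_files)
    # No OVERWRITE: new files are added; a same-named file is replaced,
    # following the description in the text.
    merged.update(incoming)
    return merged


existing = {"da.txt": "1,t1\n2,t2\n", "old.txt": "9,t9\n"}
incoming = {"da.txt": "3,t3\n"}

appended = load_data(existing, incoming)
replaced = load_data(existing, incoming, overwrite=True)
print(sorted(appended))
print(sorted(replaced))
```

Without OVERWRITE the unrelated file `old.txt` survives; with OVERWRITE only the newly loaded file remains.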

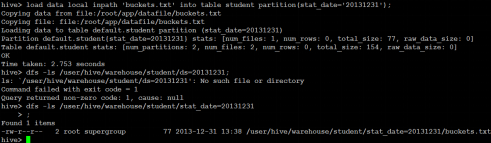

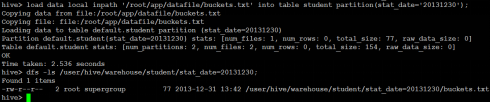

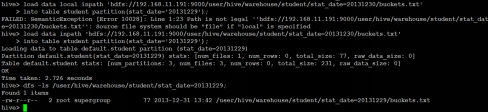

Test

hive> load data local inpath '/root/da.txt' into table td_ext;

Loading data to table default.td_ext

OK

Time taken: 1.46 seconds

hive> select * from td_ext;

OK

1 t1

2 t2

3 t3

Time taken: 2.167 seconds, Fetched: 3 row(s)

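Load data from a relative path.

Load data from an absolute path.

Load data from a URI that includes the scheme.

Use the OVERWRITE keyword.

Create a bucketed table

hive> create table td_ext5(id int, name string) clustered by(id) sorted by (name) into 2 buckets row format delimited fields terminated by ',';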

OK

Time taken: 0.469 second

Insert data

1,t1

2,t2

3,t3

4,t4

5,t5

6,t6

7,t7

Time taken: 0.135 seconds, Fetched: 2 row(s)

hive> load data local inpath ‘/root/da.txt’ into table td_ext5 ;

Loading data to table default.td_ext5

OK

Time taken: 0.461 seconds

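Solution

LOAD DATA only copies the file into the table directory, so the rows are not split into buckets. Populate the bucketed table with INSERT ... SELECT instead.

# Set variables: enforce bucketing, and set the number of reducers to the number of buckets

hive> set hive.enforce.bucketing=true;

hive> set mapreduce.job.reduces=4;

Create table td_ext6

create table td_ext6(id int, name string) clustered by(id) sorted by (name) into 2 buckets row format delimited fields terminated by ',';

Insert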

insert into table td_ext6

select id,name from td_ext5 distribute by(id) sort by(id asc);

hive> insert overwrite table td_ext6

> select * from td_ext5 cluster by(id) sort by(name);

FAILED: SemanticException 2:45 Cannot have both CLUSTER BY and SORT BY clauses. Error encountered near token 'name'

hive> insert overwrite table td_ext6

> select * from td_ext5 cluster by(id) sort by(id);

FAILED: SemanticException 2:45 Cannot have both CLUSTER BY and SORT BY clauses. Error encountered near token 'id'

hive> insert into table td_ext6

> select id,name from td_ext5 distribute by(id) sort by(id asc);

WARNING: Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. tez, spark) or using Hive 1.X releases

Query ID = root_20190309024031_9884882d-f4fd-4e86-b31d-a8cc7ed18b60

Total jobs = 1

Launching Job 1 out of 1

Number of reduce tasks determined at compile time: 2

In order to change the average load for a reducer (in bytes):

set hive.exec.reducers.bytes.per.reducer=<number>

In order to limit the maximum number of reducers:

set hive.exec.reducers.max=<number>

In order to set a constant number of reducers:

set mapreduce.job.reduces=<number>

Starting Job = job_1552027080460_0002, Tracking URL = http://HAT02:8088/proxy/application_1552027080460_0002/

Kill Command = /home/hadoop/app/hadoop-2.6.4/bin/hadoop job -kill job_1552027080460_0002

Hadoop job information for Stage-1: number of mappers: 1; number of reducers: 2

2019-03-09 02:41:44,623 Stage-1 map = 0%, reduce = 0%

2019-03-09 02:42:34,243 Stage-1 map = 100%, reduce = 0%, Cumulative CPU 1.14 sec

2019-03-09 02:43:08,802 Stage-1 map = 100%, reduce = 50%, Cumulative CPU 2.7 sec

2019-03-09 02:43:09,838 Stage-1 map = 100%, reduce = 100%, Cumulative CPU 4.33 sec

MapReduce Total cumulative CPU time: 4 seconds 330 msec

Ended Job = job_1552027080460_0002

Loading data to table default.td_ext6

MapReduce Jobs Launched:

Stage-Stage-1: Map: 1 Reduce: 2 Cumulative CPU: 4.33 sec HDFS Read: 10758 HDFS Write: 177 SUCCESS

Total MapReduce CPU Time Spent: 4 seconds 330 msec

OK

Time taken: 159.52 seconds

Add/drop partitions

ALTER TABLE table_name ADD [IF NOT EXISTS] partition_spec [ LOCATION 'location1' ] partition_spec [ LOCATION 'location2' ] …

partition_spec:

: PARTITION (partition_col = partition_col_value, partition_col = partition_col_value, …)

ALTER TABLE table_name DROP partition_spec, partition_spec,…

Example

hive> alter table td_ext2 add partition(part="US");

OK

Time taken: 0.283 seconds

hive> select * from td_ext2;

hive> alter table td_ext2 drop partition(part="US");

Dropped the partition part=US

OK

Time taken: 0.715 seconds

Rename a table

- Syntax

ALTER TABLE table_name RENAME TO new_table_name

Add/replace columns

ALTER TABLE table_name ADD|REPLACE COLUMNS (col_name data_type [COMMENT col_comment], …)

Note: ADD appends new columns after all existing columns (but before the partition columns); REPLACE replaces all of the table's columns.

Show commands

show tables

show databases

show partitions

show functions

desc extended t_name;

desc formatted table_name;

Insert

Syntax

INSERT OVERWRITE TABLE tablename1 [PARTITION (partcol1=val1, partcol2=val2 …)] select_statement1 FROM from_statement

Multiple inserts:

FROM from_statement

INSERT OVERWRITE TABLE tablename1 [PARTITION (partcol1=val1, partcol2=val2 …)] select_statement1

[INSERT OVERWRITE TABLE tablename2 [PARTITION …] select_statement2] …

Dynamic partition inserts:

INSERT OVERWRITE TABLE tablename PARTITION (partcol1[=val1], partcol2[=val2] …) select_statement FROM from_statement

- Examples

Basic insert.

Multiple inserts.

Dynamic partition inserts.

Export table data

Syntax

INSERT OVERWRITE [LOCAL] DIRECTORY directory1 SELECT … FROM …

multiple inserts:

FROM from_statement

INSERT OVERWRITE [LOCAL] DIRECTORY directory1 select_statement1

[INSERT OVERWRITE [LOCAL] DIRECTORY directory2 select_statement2] …

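Export files to the local file system

Notes:

When data is written to the file system it is serialized as text, with columns separated by ^A and rows terminated by \n. The separator is hard to spot when viewing the file with the more command; to make it visible, use:

sed -e 's/\x01/|/g' filename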
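The same substitution can be done in Python when sed is not at hand. This is a minimal sketch; `show_delimiters` is a hypothetical helper and the sample string stands in for the contents of an exported file.

```python
def show_delimiters(line: str, replacement: str = "|") -> str:
    """Replace the ^A (\\x01) field separator with a visible character."""
    return line.replace("\x01", replacement)


# Stand-in for two exported rows whose columns are separated by ^A.
exported = "1\x01t1\n2\x01t2\n"
for line in exported.splitlines():
    print(show_delimiters(line))
```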

Export data to HDFS

SELECT

Select operations

Syntax

SELECT [ALL | DISTINCT] select_expr, select_expr, …

FROM table_reference

[WHERE where_condition]

[GROUP BY col_list [HAVING condition]]

[CLUSTER BY col_list

| [DISTRIBUTE BY col_list] [SORT BY| ORDER BY col_list]

]

[LIMIT number]

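Notes: 1. ORDER BY sorts the input globally, so it uses a single reducer, which leads to long run times when the input is large.

2. SORT BY is not a global sort; it sorts the data before it enters each reducer. So if you sort with SORT BY and set mapred.reduce.tasks>1, SORT BY only guarantees that each reducer's output is sorted, not that the overall output is.

3. DISTRIBUTE BY sends rows with the same value of the specified columns to the same reducer.

4. CLUSTER BY has the functionality of DISTRIBUTE BY and additionally sorts by that column. It is therefore often described as CLUSTER BY = DISTRIBUTE BY + SORT BY.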
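The difference between the notes above can be modelled in a few lines of Python. This is a simplified sketch and not how Hive schedules work: it assumes rows are routed to reducers by integer key modulo the reducer count, and `sort_by` and `order_by` are hypothetical helper names.

```python
def sort_by(rows, num_reducers, distribute_key, sort_key):
    """Model DISTRIBUTE BY + SORT BY: partition rows, then sort each partition."""
    reducers = [[] for _ in range(num_reducers)]
    for row in rows:
        # Assumption: integer keys are routed by key modulo the reducer count.
        reducers[distribute_key(row) % num_reducers].append(row)
    return [sorted(part, key=sort_key) for part in reducers]


def order_by(rows, sort_key):
    """Model ORDER BY: a single reducer sorts every row."""
    return sorted(rows, key=sort_key)


rows = [(5, "t5"), (2, "t2"), (7, "t7"), (4, "t4"), (1, "t1")]
per_reducer = sort_by(rows, 2, distribute_key=lambda r: r[0], sort_key=lambda r: r[0])
concatenated = [row for part in per_reducer for row in part]
globally_sorted = order_by(rows, sort_key=lambda r: r[0])

print(per_reducer)
print(globally_sorted)
```

Each reducer's list is sorted, but concatenating the lists does not give the globally sorted order that the single-reducer ORDER BY model returns.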

Hive Join

Syntax

join_table:

table_reference JOIN table_factor [join_condition]

| table_reference {LEFT|RIGHT|FULL} [OUTER] JOIN table_reference join_condition

| table_reference LEFT SEMI JOIN table_reference join_condition

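Hive supports equality joins, outer joins, and left/right joins. Hive does not support non-equality joins, because they are very difficult to convert into map/reduce tasks. In addition, Hive supports joining more than two tables.

Keep the following key points in mind when writing join queries:

1. Only equality joins are supported.

For example: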

SELECT a.* FROM a JOIN b ON (a.id = b.id)

SELECT a.* FROM a JOIN b

ON (a.id = b.id AND a.department = b.department)

are valid, whereas:

SELECT a.* FROM a JOIN b ON (a.id>b.id)

is invalid.

2. More than two tables can be joined.

For example:

SELECT a.val, b.val, c.val FROM a JOIN b

ON (a.key = b.key1) JOIN c ON (c.key = b.key2)

If all tables in the join use the same join key, the join is converted into a single map/reduce task. For example:

SELECT a.val, b.val, c.val FROM a JOIN b

ON (a.key = b.key1) JOIN c

ON (c.key = b.key1)

is converted into a single map/reduce task, because only b.key1 is used as the join key.

SELECT a.val, b.val, c.val FROM a JOIN b ON (a.key = b.key1)

JOIN c ON (c.key = b.key2)

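whereas this join is converted into two map/reduce tasks, because b.key1 is used in the first join condition and b.key2 in the second.

3. The logic of each map/reduce task during a join:

The reducer caches the records of every table in the join sequence except the last one, and then streams the last table through while serializing the results to the file system. This implementation helps reduce memory usage on the reduce side. In practice, put the largest table last; otherwise a large amount of memory is wasted on caching. For example: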

SELECT a.val, b.val, c.val FROM a

JOIN b ON (a.key = b.key1) JOIN c ON (c.key = b.key1)

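All tables use the same join key, so the join is computed in one map/reduce task. The reduce side caches the records of tables a and b, and computes a join result each time it receives a record from table c. Similarly: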

SELECT a.val, b.val, c.val FROM a

JOIN b ON (a.key = b.key1) JOIN c ON (c.key = b.key2)

This uses two map/reduce tasks. The first caches table a and streams table b; the second caches the result of the first map/reduce task and streams table c.
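The cache-then-stream logic of a single join step can be sketched as follows. This is a toy model of the idea only, not Hive's reducer code; `reduce_side_join` is a hypothetical name and the rows are made up.

```python
from collections import defaultdict


def reduce_side_join(buffered, streamed):
    """Cache the earlier table in memory, then stream the last table past it.

    Both inputs are iterables of (key, value) pairs. Only `buffered` is held
    in memory, which is why the largest table should come last.
    """
    cache = defaultdict(list)
    for key, value in buffered:
        cache[key].append(value)
    for key, value in streamed:  # consumed one row at a time, never stored
        for cached_value in cache.get(key, []):
            yield key, cached_value, value


small = [(1, "a1"), (2, "a2")]
# A generator stands in for a large table that is only read once.
large = ((k, v) for k, v in [(1, "c1"), (1, "c1b"), (3, "c3")])

result = list(reduce_side_join(small, large))
print(result)
```

Memory use grows with the size of the cached input, while the streamed input contributes one row at a time.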

4. The LEFT, RIGHT, and FULL OUTER keywords handle the case where a join finds no matching record.

For example:

SELECT a.val, b.val FROM

a LEFT OUTER JOIN b ON (a.key=b.key)

outputs a row for every record in table a. The output is a.val, b.val when a.key=b.key; when no b.key equals the a.key, the output is:

a.val, NULL

So every record of table a is preserved;

"a RIGHT OUTER JOIN b" preserves every record of table b.

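Joins happen before the WHERE clause. To restrict the output of a join, put the filter condition in the WHERE clause, or in the join clause. Partitioned tables are a common source of confusion here:

SELECT a.val, b.val FROM a

LEFT OUTER JOIN b ON (a.key=b.key)

WHERE a.ds='2009-07-07' AND b.ds='2009-07-07'

This joins a to b (OUTER JOIN) and lists a.val and b.val. The WHERE clause can also reference other columns as filter conditions. However, as described earlier, when no b row matches an a row, every column of b is NULL, including the ds column. The WHERE condition on b.ds therefore filters out every join output row that had no matching b row, which defeats the purpose of the LEFT OUTER join. The fix is to use the following syntax for the OUTER JOIN:

SELECT a.val, b.val FROM a LEFT OUTER JOIN b

ON (a.key=b.key AND

b.ds='2009-07-07' AND

a.ds='2009-07-07')

The results of this query are filtered during the join stage, so the problem above does not arise. The same logic applies to RIGHT and FULL joins.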
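To make the WHERE versus ON difference concrete, here is a small Python model of a left outer join. It only mimics the semantics on made-up rows; `left_outer_join` is a hypothetical helper, and `None` plays the role of NULL.

```python
def left_outer_join(left, right, on):
    """Return (l, r) pairs; r is None when no right row satisfies `on`."""
    result = []
    for l in left:
        matches = [r for r in right if on(l, r)]
        if matches:
            result.extend((l, r) for r in matches)
        else:
            result.append((l, None))
    return result


# Hypothetical rows with a key, a value and the partition column ds.
a = [{"key": 1, "val": "a1", "ds": "2009-07-07"},
     {"key": 2, "val": "a2", "ds": "2009-07-07"}]
b = [{"key": 1, "val": "b1", "ds": "2009-07-07"}]
DAY = "2009-07-07"

# Filter in WHERE: runs after the join, where unmatched rows have b.ds = NULL.
joined = left_outer_join(a, b, lambda l, r: l["key"] == r["key"])
where_version = [(l["val"], r["val"]) for l, r in joined
                 if l["ds"] == DAY and r is not None and r["ds"] == DAY]

# Filter in ON: applied while matching, so unmatched a rows survive with NULL.
joined = left_outer_join(
    a, b, lambda l, r: l["key"] == r["key"] and l["ds"] == DAY and r["ds"] == DAY)
on_version = [(l["val"], r["val"] if r else None) for l, r in joined]

print(where_version)
print(on_version)
```

The WHERE version loses the row for key 2, while the ON version keeps it with a NULL on the b side.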

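Joins are not commutative.

Joins are left-associative, regardless of whether they are LEFT or RIGHT joins.

SELECT a.val1, a.val2, b.val, c.val

FROM a

JOIN b ON (a.key = b.key)

LEFT OUTER JOIN c ON (a.key = c.key)

This first joins a to b, discarding every record whose join key has no match in the other table, and then joins that intermediate result to c. The result is unintuitive when a key exists in both a and c but not in b: the whole row (including a.val1, a.val2, and a.key) is dropped in the first join, a JOIN b. When the intermediate result is then joined to c, no a.key is left to match that c.key, so its c.val does not appear in the output. If the second join were a RIGHT OUTER JOIN instead, that key would produce: NULL, NULL, NULL, c.val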
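The left-to-right evaluation can be traced with a small Python model. It is a sketch of join semantics on made-up rows, not of Hive's execution; `join` and `project` are hypothetical helpers, rows are dictionaries keyed by qualified column names, inputs are assumed non-empty, and `None` stands for NULL.

```python
def join(left, right, left_col, right_col, how="inner"):
    """Join lists of dict rows on left_col = right_col.

    how is "inner", "left" or "right". Assumes both inputs are non-empty so
    that the column names can be read from the first row.
    """
    left_null = {col: None for col in left[0]}
    right_null = {col: None for col in right[0]}
    out, matched_right = [], set()
    for l in left:
        hits = [i for i, r in enumerate(right)
                if l[left_col] is not None and l[left_col] == r[right_col]]
        matched_right.update(hits)
        out.extend({**l, **right[i]} for i in hits)
        if not hits and how == "left":
            out.append({**l, **right_null})
    if how == "right":
        out.extend({**left_null, **r}
                   for i, r in enumerate(right) if i not in matched_right)
    return out


def project(rows):
    """SELECT a.val1, a.val2, b.val, c.val"""
    return [(r["a.val1"], r["a.val2"], r["b.val"], r["c.val"]) for r in rows]


# Key 2 exists in a and c but not in b.
a = [{"a.key": 1, "a.val1": "x1", "a.val2": "y1"},
     {"a.key": 2, "a.val1": "x2", "a.val2": "y2"}]
b = [{"b.key": 1, "b.val": "b1"}]
c = [{"c.key": 1, "c.val": "c1"}, {"c.key": 2, "c.val": "c2"}]

ab = join(a, b, "a.key", "b.key")  # evaluated first: key 2 is dropped here
left_result = project(join(ab, c, "a.key", "c.key", how="left"))
right_result = project(join(ab, c, "a.key", "c.key", how="right"))
print(left_result)
print(right_result)
```

With the LEFT OUTER JOIN the row for key 2 is absent altogether; with a RIGHT OUTER JOIN it comes back as NULL, NULL, NULL, c.val.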

Test data

[root@HAT01 ~]# vi 1.txt

1,a

2,b

3,c

4,d

7,y

8,u

[root@HAT01 ~]# vi 2.txt

2,bb

7,yy

9,pp

Create tables and load data

hive> create table ttt_t1(id int,name string) row format delimited fields terminated by ',';

OK

Time taken: 2.59 seconds

hive> create table ttt_t2(id int,name string) row format delimited fields terminated by ',';

OK

Time taken: 0.184 seconds

hive>

hive> load data local inpath '/root/1.txt' into table ttt_t1;

Loading data to table default.ttt_t1

OK

Time taken: 1.801 seconds

hive> load data local inpath '/root/2.txt' into table ttt_t2;

Loading data to table default.ttt_t2

OK

Time taken: 0.419 seconds

inner join

hive> select * from ttt_t1 a inner join ttt_t2 b on a.id=b.id;

WARNING: Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. tez, spark) or using Hive 1.X releases.

Query ID = root_20190309044401_b46dfbb8-a065-40d5-8867-345385016bc0

Total jobs = 1

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/home/hadoop/app/hive/lib/log4j-slf4j-impl-2.4.1.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/home/hadoop/app/hadoop-2.6.4/share/hadoop/common/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]

2019-03-09 04:44:23 Starting to launch local task to process map join; maximum memory = 518979584

2019-03-09 04:44:45 Dump the side-table for tag: 1 with group count: 3 into file: file:/tmp/root/e6a742cd-bf4d-448a-8e11-231bf6f8e66b/hive_2019-03-09_04-44-01_179_3861251583098106169-1/-local-10004/HashTable-Stage-3/MapJoin-mapfile01--.hashtable

2019-03-09 04:44:46 Uploaded 1 File to: file:/tmp/root/e6a742cd-bf4d-448a-8e11-231bf6f8e66b/hive_2019-03-09_04-44-01_179_3861251583098106169-1/-local-10004/HashTable-Stage-3/MapJoin-mapfile01--.hashtable (326 bytes)

2019-03-09 04:44:46 End of local task; Time Taken: 23.134 sec.

Execution completed successfully

MapredLocal task succeeded

Launching Job 1 out of 1

Number of reduce tasks is set to 0 since there's no reduce operator

Starting Job = job_1552027080460_0003, Tracking URL = http://HAT02:8088/proxy/application_1552027080460_0003/

Kill Command = /home/hadoop/app/hadoop-2.6.4/bin/hadoop job -kill job_1552027080460_0003

Hadoop job information for Stage-3: number of mappers: 1; number of reducers: 0

2019-03-09 04:46:05,600 Stage-3 map = 0%, reduce = 0%

2019-03-09 04:46:55,216 Stage-3 map = 100%, reduce = 0%, Cumulative CPU 1.76 sec

MapReduce Total cumulative CPU time: 1 seconds 760 msec

Ended Job = job_1552027080460_0003

MapReduce Jobs Launched:

Stage-Stage-3: Map: 1 Cumulative CPU: 1.76 sec HDFS Read: 6009 HDFS Write: 129 SUCCESS

Total MapReduce CPU Time Spent: 1 seconds 760 msec

OK

2 b 2 bb

7 y 7 yy

Time taken: 175.256 seconds, Fetched: 2 row(s)

left join

hive> select * from ttt_t1 a left join ttt_t2 b on a.id=b.id;

WARNING: Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. tez, spark) or using Hive 1.X releases.

Query ID = root_20190309045404_5c095268-16dc-4239-90db-dbe3a56e2e89

Total jobs = 1

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/home/hadoop/app/hive/lib/log4j-slf4j-impl-2.4.1.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/home/hadoop/app/hadoop-2.6.4/share/hadoop/common/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]

2019-03-09 04:54:19 Starting to launch local task to process map join; maximum memory = 518979584

2019-03-09 04:54:41 Dump the side-table for tag: 1 with group count: 3 into file: file:/tmp/root/e6a742cd-bf4d-448a-8e11-231bf6f8e66b/hive_2019-03-09_04-54-04_504_5161753169631820783-1/-local-10004/HashTable-Stage-3/MapJoin-mapfile21--.hashtable

2019-03-09 04:54:41 Uploaded 1 File to: file:/tmp/root/e6a742cd-bf4d-448a-8e11-231bf6f8e66b/hive_2019-03-09_04-54-04_504_5161753169631820783-1/-local-10004/HashTable-Stage-3/MapJoin-mapfile21--.hashtable (326 bytes)

2019-03-09 04:54:41 End of local task; Time Taken: 22.603 sec.

Execution completed successfully

MapredLocal task succeeded

Launching Job 1 out of 1

Number of reduce tasks is set to 0 since there's no reduce operator

Starting Job = job_1552027080460_0005, Tracking URL = http://HAT02:8088/proxy/application_1552027080460_0005/

Kill Command = /home/hadoop/app/hadoop-2.6.4/bin/hadoop job -kill job_1552027080460_0005

Hadoop job information for Stage-3: number of mappers: 1; number of reducers: 0

2019-03-09 04:55:56,647 Stage-3 map = 0%, reduce = 0%

2019-03-09 04:56:44,754 Stage-3 map = 100%, reduce = 0%, Cumulative CPU 1.18 sec

MapReduce Total cumulative CPU time: 1 seconds 180 msec

Ended Job = job_1552027080460_0005

MapReduce Jobs Launched:

Stage-Stage-3: Map: 1 Cumulative CPU: 1.18 sec HDFS Read: 5678 HDFS Write: 217 SUCCESS

Total MapReduce CPU Time Spent: 1 seconds 180 msec

OK

1 a NULL NULL

2 b 2 bb

3 c NULL NULL

4 d NULL NULL

7 y 7 yy

8 u NULL NULL

Time taken: 162.569 seconds, Fetched: 6 row(s)

hive>

From here on, only the key output is shown.

right join

hive> select * from ttt_t1 a right join ttt_t2 b on a.id=b.id;

2 b 2 bb

7 y 7 yy

NULL NULL 9 pp
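The three result sets above can be reproduced outside Hive with a short Python model of the join semantics. This is only a sketch for checking expectations on the same test data; `join` is a hypothetical helper and `None` stands for NULL.

```python
def join(left, right, how="inner"):
    """Join (id, name) tuples on id. how is "inner", "left" or "right"."""
    rows = []
    if how == "right":
        # Every right row is kept; unmatched ones get NULLs on the left side.
        for r in right:
            hits = [l for l in left if l[0] == r[0]]
            rows.extend(l + r for l in hits)
            if not hits:
                rows.append((None, None) + r)
        return rows
    for l in left:
        hits = [r for r in right if r[0] == l[0]]
        rows.extend(l + r for r in hits)
        if not hits and how == "left":
            # Left join keeps unmatched left rows with NULLs on the right side.
            rows.append(l + (None, None))
    return rows


# Same contents as 1.txt and 2.txt.
ttt_t1 = [(1, "a"), (2, "b"), (3, "c"), (4, "d"), (7, "y"), (8, "u")]
ttt_t2 = [(2, "bb"), (7, "yy"), (9, "pp")]

for how in ("inner", "left", "right"):
    print(how, join(ttt_t1, ttt_t2, how))
```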