各个分类网络的结构(持续更新)

文章目录

PS: 以下内容只详细讲解ResNet网络,在其基础上,其余网络只展示基本结构

torchvision.datasets.数据集简介:

(1)MNIST:10个类,60000个训练数据,10000个测试数据,(batch_size, 1, 28, 28)

(2)CIFAR10:10个类,50000个训练数据,10000个测试数据,(batch_size, 3, 32, 32)

(3)CIFAR100:100个类,50000个训练数据,10000个测试数据,(batch_size, 3, 32, 32)

(20个超类,每个超类有5个小类,每张图两个标签)

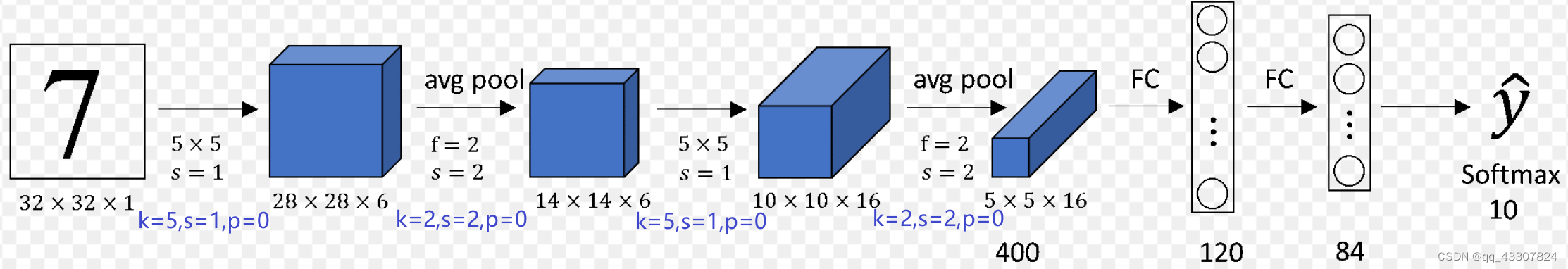

一、LeNet

二、AlexNet

(1)使用官方接口:torchvision.models.alexnet(pretrained=False, num_classes=1000, dropout=0.5)

(2)上述参数可以自己设置

(如num_classes=10)

,输入图片的尺寸也可以是任意的,但每层输出的通道数是相同的,图片大小会相应成倍变化

(3)官方源码的每层通道数或padding与下图略有不同,可以自己按需要进行修改

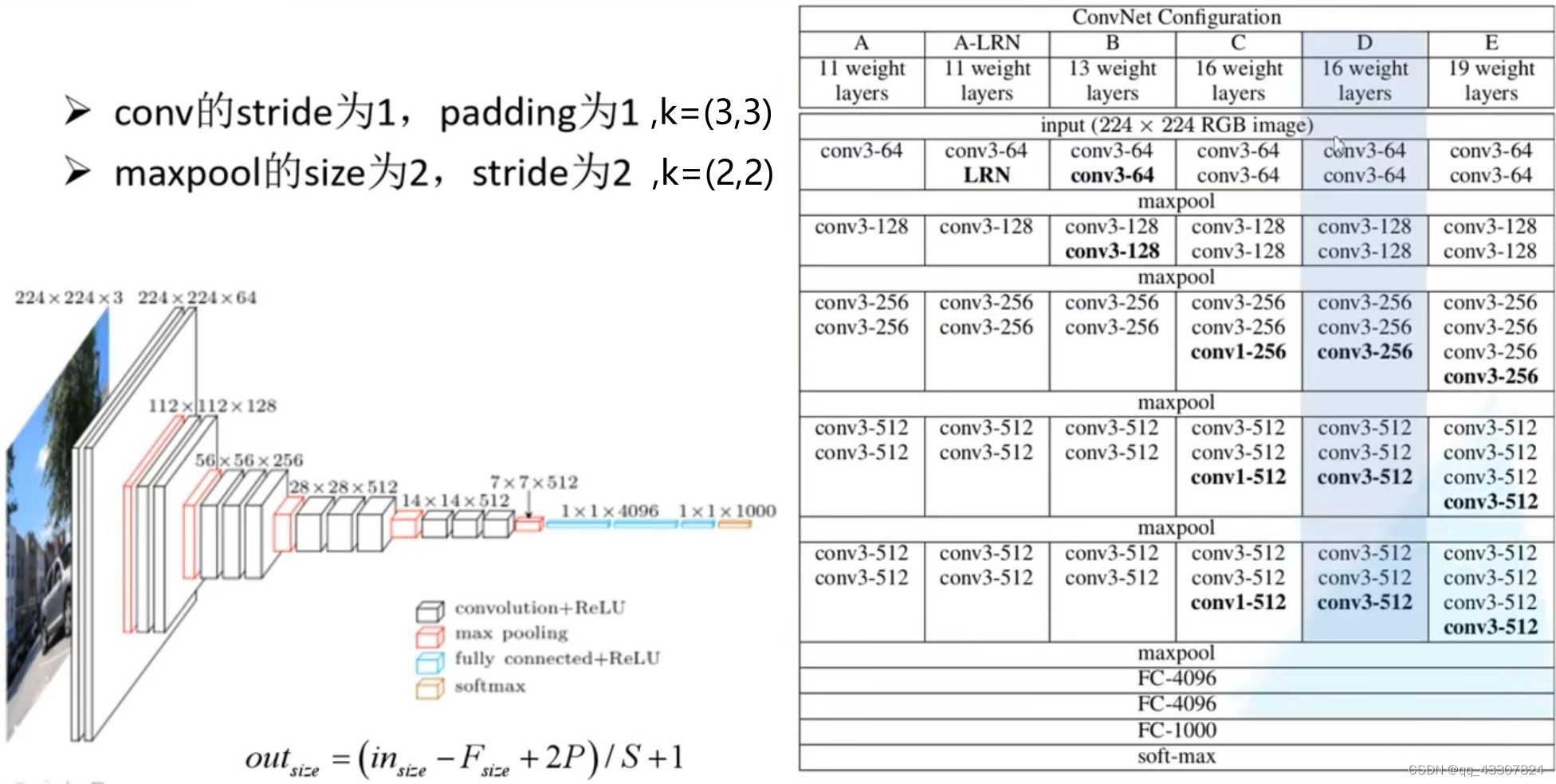

三、VGG

用法与上面AlexNet一致,且官方源码结构和下图完全一致。

四、ResNet详解

-

参考1

,

参考2

-

直接调用官方torchvision.models.resnet18()等函数

,通过下面第6点pytorch官方源码分析可知,

(1)可自定义的位置参数有

:pretrained=False, progress=True, num_classes=1000,

(2)输入图片的尺寸

可以是任意的,但每层输出的通道数和输入图片(3,224,224)时相同,图片大小相应成倍变化。 -

以输入图片(3,224,224)为例

分析每层的输出

→64,k

=

7

,

s

=

2

,

p

=

3

c

o

n

v

1

\xrightarrow[{

{\text{64,k}} = 7,s = 2,p = 3}]{

{conv1}}

co

n

v

1

64,k

=

7

,

s

=

2

,

p

=

3

(64,112,112)

→64,k

=

3

,

s

=

2

,

p

=

1

M

a

x

P

o

o

l

\xrightarrow[{

{\text{64,k}} = 3,s = 2,p = 1}]{

{MaxPool}}

M

a

x

P

oo

l

64,k

=

3

,

s

=

2

,

p

=

1

(64,56,56)

→∗

4

B

a

s

i

c

B

l

o

c

k

(

R

e

s

N

e

t

18

,

34

)

/

B

o

t

t

l

e

n

e

c

k

(

R

e

s

N

e

t

50

,

101

,

152

)

\xrightarrow[*{

{\text{ 4}}}]{

{BasicBlock(ResNet18,34)/Bottleneck(ResNet50,101,152)}}

B

a

s

i

c

Bl

oc

k

(

R

es

N

e

t

18

,

34

)

/

B

o

ttl

e

n

ec

k

(

R

es

N

e

t

50

,

101

,

152

)

∗

4

(512/2048,7,7)

→outputsize=

(

1

,

1

)

A

d

a

p

t

i

v

e

A

v

g

P

o

o

l

2

d

\xrightarrow[{

{\text{outputsize=}(1,1)}}]{

{AdaptiveAvgPool2d}}

A

d

a

pt

i

v

e

A

vg

P

oo

l

2

d

outputsize=

(

1

,

1

)

(512/2048,1,1)

→1000

F

C

,

s

o

f

t

m

a

x

\xrightarrow[{

{\text{1000}}}]{

{FC,softmax}}

FC

,

so

f

t

ma

x

1000

输出1000个类别的概率 -

两种残差结构

:左边残差结构BasicBlock()

(用于ResNet18,34网络)

;右边残差结构Bottleneck

(ResNet50,101,152网络)

-

不同层数的ResNet网络结构及详细说明

(下面第二张图虚线表示通道数发生了变化,用1*1的卷积调整通道数,步长为2调整尺寸大小)

:

-

pytorch官方源码分析

源码

,

使用案例:net=torchvision.models.resnet18(num_classes=10)的分析:

(1)调用resnet18函数

:

传递

默认参数

pretrained=False, progress=True和**kwargs关键字参数

(与下面调用的函数或类有关)

给_resnet函数

。

(2)调用_resnet函数

:

传递

位置参数block

(BasicBlock)

,layers

([2,2,2,2])

,**kwargs

给ResNet类

(将ResNet类初始化为实例对象,由BasicBlock和[2,2,2,2]可知是构建resnet18模型)

,

传递

位置参数arch

(‘resnet18’)

,pretrained,progress

用来

选择是否加载预训练参数

(arch表示加载哪个resnet网络的预训练参数,当pretrained=True时,progress=True表示显示加载进度)

。

(3)ResNet类

:有

默认参数

num_classes=1000;

传递

位置参数block

(BasicBlock)

,layers

([2,2,2,2])

,

给_make_layer

(传进的参数BasicBlock即:选择类名BasicBlock(ResNet18,34)还是Bottleneck(ResNet50,101,152)来实例化搭建残差结构,重复[2,2,2,2]次)

,

(4)BasicBlock和Bottleneck类

:用来构建两种残存结构#代码不全,仅用于上述torchvision.models.resnet18()分析 def resnet18(pretrained=False, progress=True, **kwargs): return _resnet('resnet18', BasicBlock, [2, 2, 2, 2], pretrained, progress, **kwargs) def _resnet(arch, block, layers, pretrained, progress, **kwargs): model = ResNet(block, layers, **kwargs) if pretrained: state_dict = load_state_dict_from_url(model_urls[arch],progress=progress) model.load_state_dict(state_dict) return model class ResNet(nn.Module): def __init__(self, block: Type[Union[BasicBlock, Bottleneck]], layers: List[int], num_classes: int = 1000, ··· self.conv1 = nn.Conv2d(3, self.inplanes, kernel_size=7, stride=2, padding=3, bias=False) self.bn1 = norm_layer(self.inplanes) self.relu = nn.ReLU(inplace=True) self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1) """block,layers传到_make_layer里。block选择类名BasicBlock(ResNet18,34) 还是Bottleneck(ResNet50,101,152)来实例化搭建残差结构,重复layers[2,2,2,2]次。 expansion在这两个残差结构里面定义,控制最后卷积通道数是512还是2048""" self.layer1 = self._make_layer(block, 64, layers[0]) self.layer2 = self._make_layer(block, 128, layers[1], stride=2) self.layer3 = self._make_layer(block, 256, layers[2], stride=2) self.layer4 = self._make_layer(block, 512, layers[3], stride=2) self.avgpool = nn.AdaptiveAvgPool2d((1, 1)) self.fc = nn.Linear(512 * block.expansion, num_classes) def _make_layer(self,block,planes,blocks,stride,dilate) -> nn.Sequential: layers = [] ··· layers.append(block(···)) for _ in range(1, blocks): layers.append(block(···)) return nn.Sequential(*layers) def _forward_impl(self, x: Tensor) -> Tensor: """ 该部分即所有ResNet网络的主干:卷积,最大池化,4次残差结构,自适应平均池化,全连接及softmax """ conv1(x),bn1(x),relu(x) maxpool(x) layer1(x),layer2(x),layer3(x),layer4(x) avgpool(x) torch.flatten(x, 1),self.fc(x) return x def forward(self, x: Tensor) -> Tensor: return self._forward_impl(x) class BasicBlock(nn.Module):#第一种残差结构BasicBlock(),用于ResNet18,34,两个3x3的卷积+shortcut expansion: int = 1 def forward(self, x: Tensor) -> Tensor: identity = x conv1(x),bn1(out),relu(out) conv2(out),bn2(out) out += identity out = self.relu(out) return out class Bottleneck(nn.Module):#第二种残差结构Bottleneck,用于ResNet50,101,152,3x3及1x1及3x3的卷积+shortcut expansion: int = 4 def forward(self, x: Tensor) -> Tensor: identity = x conv1(x),bn1(out),relu(out) conv2(x),bn2(out),relu(out) conv3(out),bn3(out) out += identity out = self.relu(out) return out