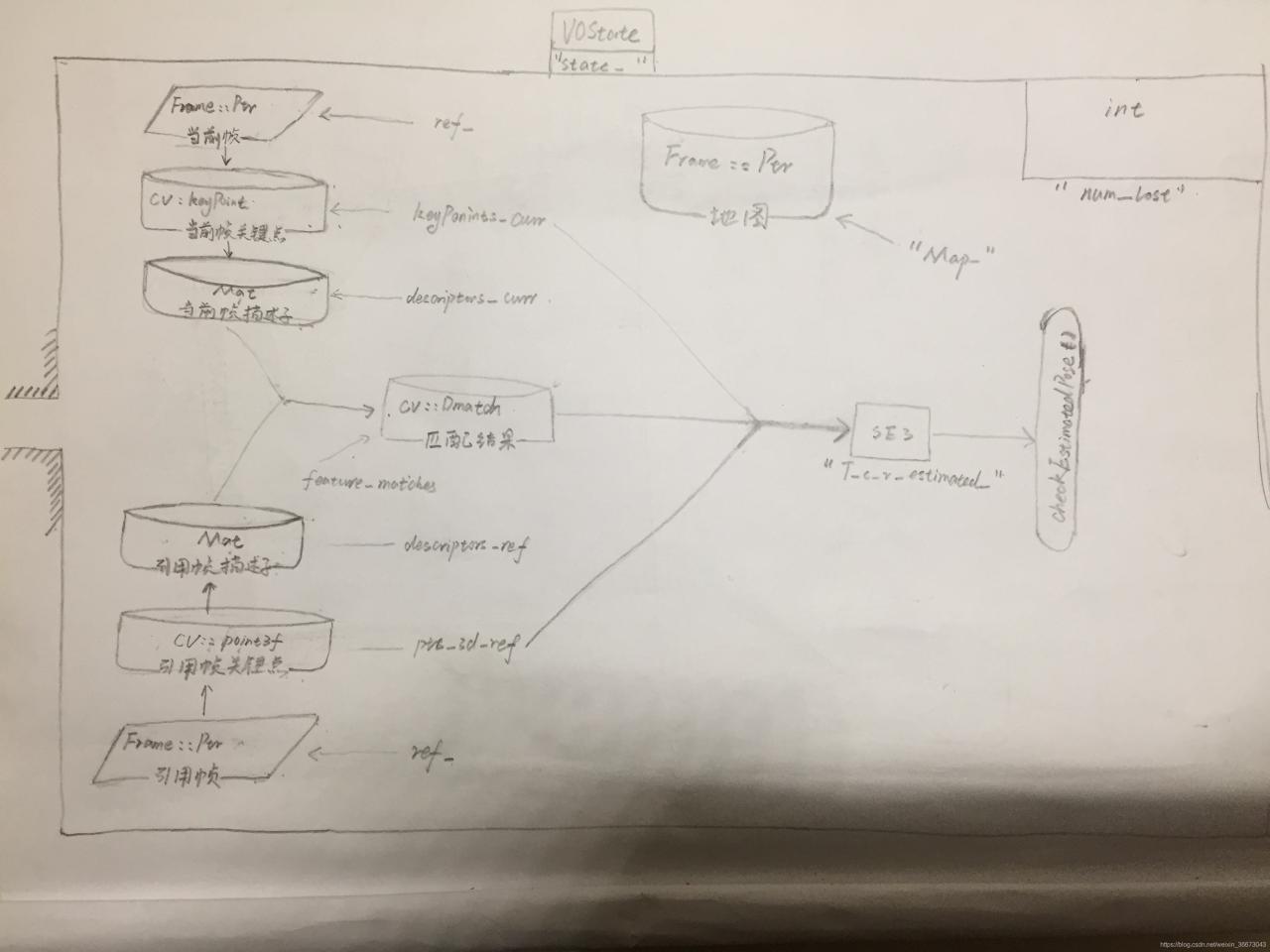

逻辑框架——要素图

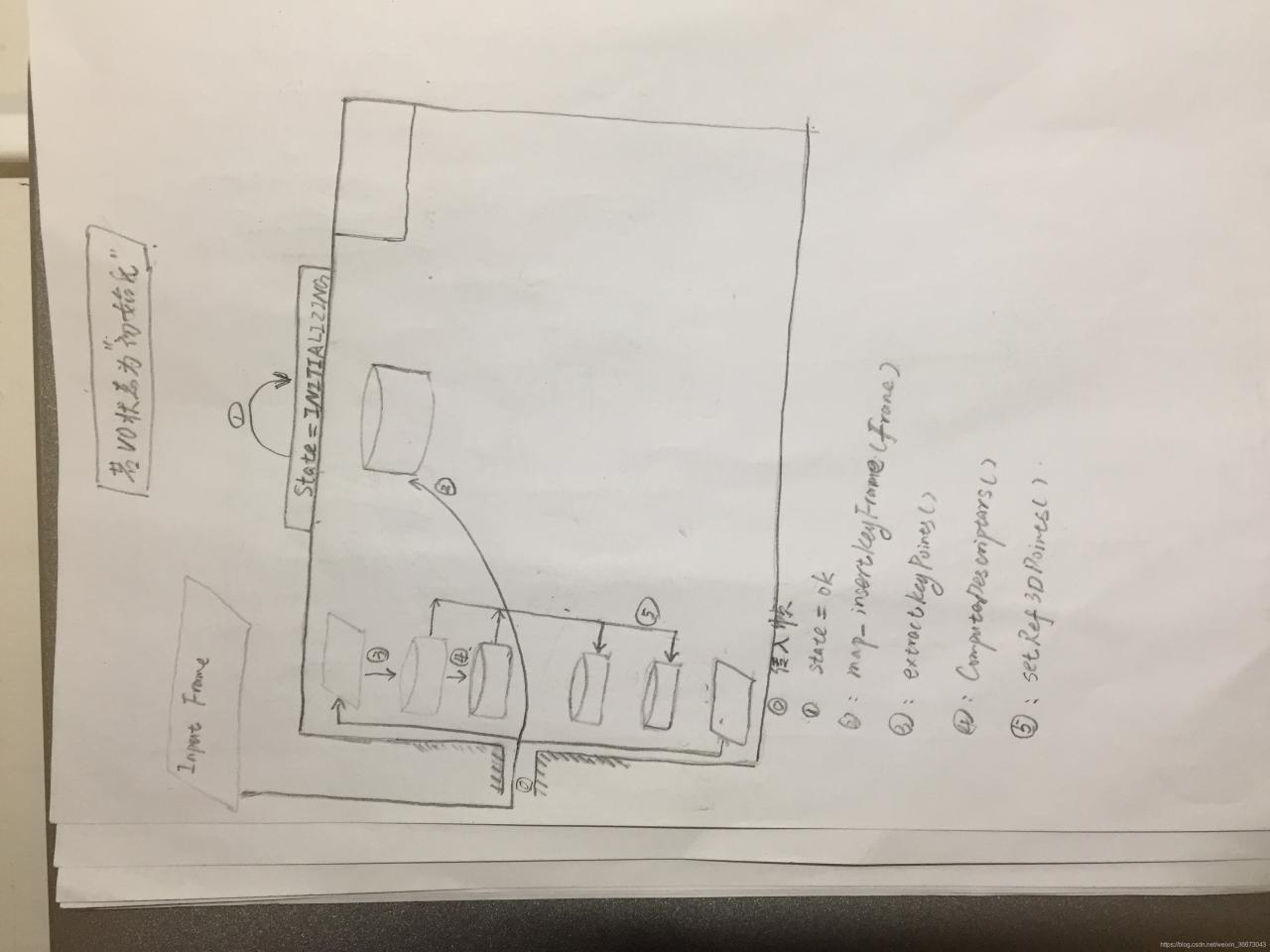

逻辑框架——流程图——>初始化状态

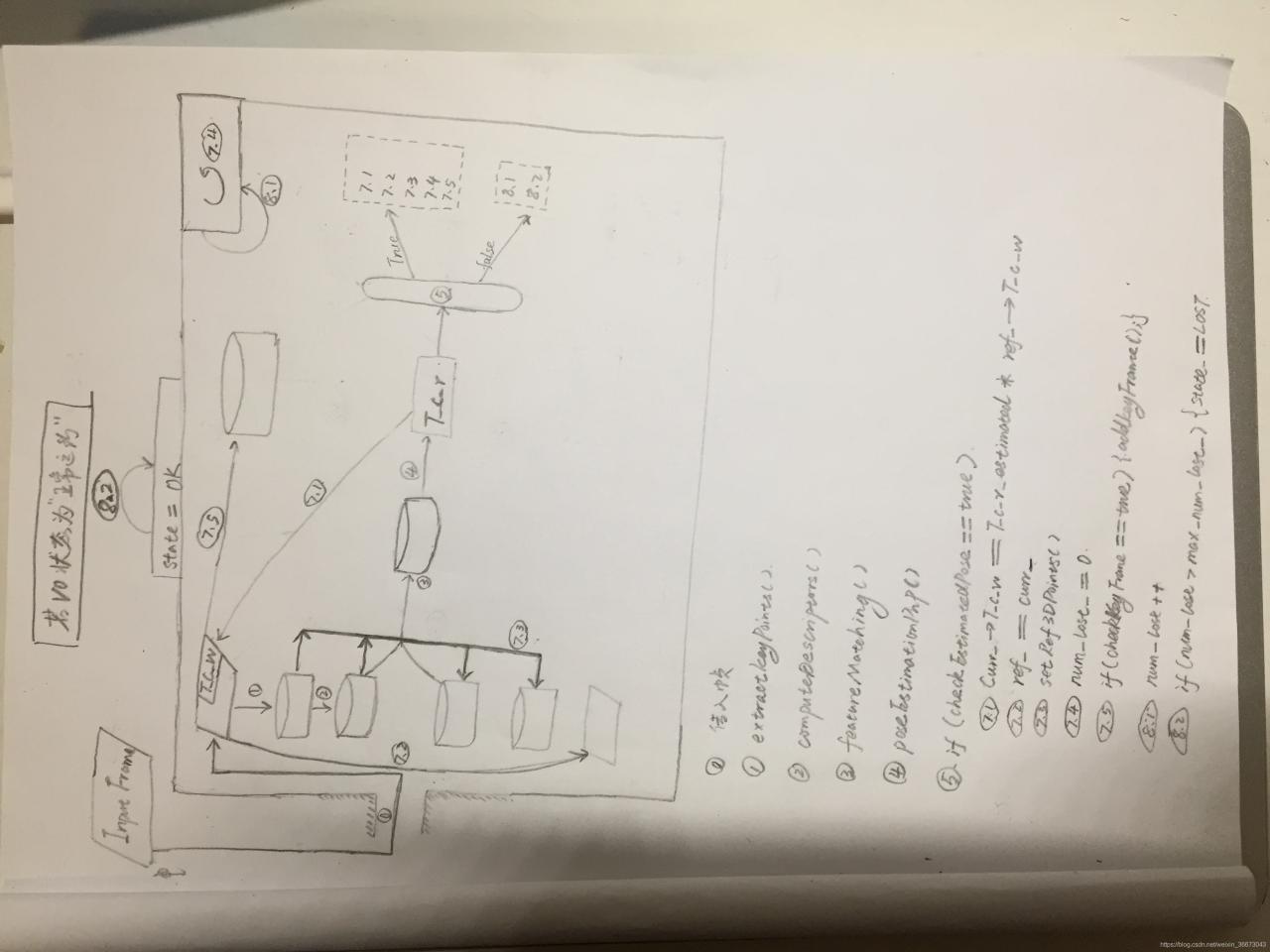

逻辑框架——流程图——>正常运转状态

头文件——/slam14/project/0.2/include/myslam/visual_odometry.h

/*

* <one line to give the program's name and a brief idea of what it does.>

* Copyright (C) 2016 <copyright holder> <email>

*

* This program is free software: you can redistribute it and/or modify

* it under the terms of the GNU General Public License as published by

* the Free Software Foundation, either version 3 of the License, or

* (at your option) any later version.

*

* This program is distributed in the hope that it will be useful,

* but WITHOUT ANY WARRANTY; without even the implied warranty of

* MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

* GNU General Public License for more details.

*

* You should have received a copy of the GNU General Public License

* along with this program. If not, see <http://www.gnu.org/licenses/>.

*

*/

#ifndef VISUALODOMETRY_H

#define VISUALODOMETRY_H

#include "myslam/common_include.h"

#include "myslam/map.h"

#include <opencv2/features2d/features2d.hpp>

namespace myslam

{

class VisualOdometry

{

public:

typedef shared_ptr<VisualOdometry> Ptr;

//枚举表征VO状态,初始化、正常、丢失

enum VOState {

INITIALIZING=-1,

OK=0,

LOST

};

//VO状态、地图(关键帧和特征点)、参考帧、当前帧

VOState state_; // current VO status

Map::Ptr map_; // map with all frames and map points

Frame::Ptr ref_; // reference frame

Frame::Ptr curr_; // current frame

//orb、当前帧特征点KeyPoint、当前帧描述子、参考帧3D坐标Point3f、参考帧描述子、匹配关系

cv::Ptr<cv::ORB> orb_; // orb detector and computer

// 当前帧的关键点,描述子存放位置

vector<cv::KeyPoint> keypoints_curr_; // keypoints in current frame

Mat descriptors_curr_; // descriptor in current frame

// 引用帧关键点,描述子存放位置

vector<cv::Point3f> pts_3d_ref_; // 3d points in reference frame

Mat descriptors_ref_; // descriptor in reference frame

// 当前帧vs引用帧匹配结果存放位置

vector<cv::DMatch> feature_matches_;

//位姿估计T、内点数(即为匹配出的关键点数量)、丢失数

SE3 T_c_r_estimated_; // the estimated pose of current frame 当前帧相对于参考帧的T

int num_inliers_; // number of inlier features in icp

int num_lost_; // number of lost times

//参数,特征数量、尺度、好匹配比率、最大持续丢失时间、最小内点数

// parameters

int num_of_features_; // number of features

double scale_factor_; // scale in image pyramid=图像金字塔比例尺

int level_pyramid_; // number of pyramid levels=金字塔层数

float match_ratio_; // ratio for selecting good matches = good matches 占比?

int max_num_lost_; // max number of continuous lost times=最大连续丢失帧数

int min_inliers_; // minimum inliers=

//关键帧筛选标准:最小旋转量&最小平移量,只要T满足其中一个即可

double key_frame_min_rot; // minimal rotation of two key-frames

double key_frame_min_trans; // minimal translation of two key-frames

public: // functions

VisualOdometry();

~VisualOdometry();

//核心,添加新关键帧

bool addFrame( Frame::Ptr frame ); // add a new frame

protected:

// inner operation

// 内部处理函数,特征匹配

void extractKeyPoints();

void computeDescriptors();

void featureMatching();

void poseEstimationPnP();

void setRef3DPoints();

// 关键帧的功能函数

void addKeyFrame();

bool checkEstimatedPose();

bool checkKeyFrame();

};

}

#endif // VISUALODOMETRY_H

源文件——/slam14/project/0.2/src/visual_odometry.cpp

/*

* <one line to give the program's name and a brief idea of what it does.>

* Copyright (C) 2016 <copyright holder> <email>

*

* This program is free software: you can redistribute it and/or modify

* it under the terms of the GNU General Public License as published by

* the Free Software Foundation, either version 3 of the License, or

* (at your option) any later version.

*

* This program is distributed in the hope that it will be useful,

* but WITHOUT ANY WARRANTY; without even the implied warranty of

* MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

* GNU General Public License for more details.

*

* You should have received a copy of the GNU General Public License

* along with this program. If not, see <http://www.gnu.org/licenses/>.

*

*/

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/imgproc/imgproc.hpp>

#include <opencv2/calib3d/calib3d.hpp>

#include <algorithm>

#include <boost/timer.hpp>

#include "myslam/config.h"

#include "myslam/visual_odometry.h"

namespace myslam

{

VisualOdometry::VisualOdometry() :

state_ ( INITIALIZING ), ref_ ( nullptr ), curr_ ( nullptr ), map_ ( new Map ), num_lost_ ( 0 ), num_inliers_ ( 0 )

{

num_of_features_ = Config::get<int> ( "number_of_features" );// 特征点数量

scale_factor_ = Config::get<double> ( "scale_factor" ); // scale in image pyramid=图像金字塔比例尺

level_pyramid_ = Config::get<int> ( "level_pyramid" );// number of pyramid levels=金字塔层数

match_ratio_ = Config::get<float> ( "match_ratio" );// ?????ratio for selecting good matches = good matches 占比?

max_num_lost_ = Config::get<float> ( "max_num_lost" );// max number of continuous lost times=最大连续丢失帧数

min_inliers_ = Config::get<int> ( "min_inliers" );// 最少的内点数量???????

key_frame_min_rot = Config::get<double> ( "keyframe_rotation" );// ?????关键帧旋转??????

key_frame_min_trans = Config::get<double> ( "keyframe_translation" );// # ????关键帧变换??????

orb_ = cv::ORB::create ( num_of_features_, scale_factor_, level_pyramid_ );

}

VisualOdometry::~VisualOdometry()

{

}

bool VisualOdometry::addFrame ( Frame::Ptr frame )

{

switch ( state_ )

{

//1. 初始化状态,第一帧

case INITIALIZING://状态:初始化

{

state_ = OK;

curr_ = ref_ = frame;//当前帧和参考帧都是第一帧,实际上当前帧指向NULL也是行的。

map_->insertKeyFrame ( frame );//将此帧插入地图中

// extract features from first frame

//提取关键点和描述子

extractKeyPoints();//初始帧(当前帧)提取特征点

computeDescriptors();// 初始帧(当前帧)计算描述子

//当前帧转化成引用帧(说实话,我觉得这个函数没有写好,语义不是很清楚,语义介于“引用的坐标2D-->3D”和“利用当前帧生成引用帧”)

setRef3DPoints();// compute the 3d position of features in ref frame

break;

}

case OK:

{

curr_ = frame;

extractKeyPoints();//当前帧提取特征点

computeDescriptors();//当前帧计算描述子

//特征匹配并计算相机位姿 T

featureMatching();//当前帧vs引用帧,结果放入feature_matches_中

poseEstimationPnP();//pnp方法进行位姿估计(说明:未利用当前帧的深度信息)

//位姿估计好

if ( checkEstimatedPose() == true ) // a good estimation

{

//当前位姿 Tcw = Tcr*Trw

curr_->T_c_w_ = T_c_r_estimated_ * ref_->T_c_w_; // T_c_w = T_c_r*T_r_w

ref_ = curr_;//当前帧为参考帧

setRef3DPoints();//参考帧特征点的3D坐标,补全depth数据

num_lost_ = 0;//num_lost重置为0。

//检查是否为关键帧

if ( checkKeyFrame() == true ) // is a key-frame

{

addKeyFrame();

}

}

else // bad estimation due to various reasons

//位姿估计坏,丢失点计数+1,判断是否大于最大丢失数,是则VO状态切换为lost

{

num_lost_++;

if ( num_lost_ > max_num_lost_ )

{

state_ = LOST;

}

return false;

}

break;

}

case LOST://3. 匹配失败状态

{

cout<<"vo has lost."<<endl;

break;

}

}

return true;

}

// 当前帧提取特征点

void VisualOdometry::extractKeyPoints()

{

orb_->detect ( curr_->color_, keypoints_curr_ );//从curr_->color_中检测出keypoint放入keypoints_curr_

}

// 当前帧计算描述子

void VisualOdometry::computeDescriptors()

{

orb_->compute ( curr_->color_, keypoints_curr_, descriptors_curr_ );//计算curr_->color_中检测出的keypoint的descriptor

}

// 特征点匹配

void VisualOdometry::featureMatching()

{

// 关键点匹配:match desp_ref and desp_curr, use OpenCV's brute force match

vector<cv::DMatch> matches;//matches----->匹配结果存放地点(筛选前))

cv::BFMatcher matcher ( cv::NORM_HAMMING );

matcher.match ( descriptors_ref_, descriptors_curr_, matches );

// 关键点筛选:select the best matches

//找到matches数组中最小距离,赋值给min_dist

float min_dis = std::min_element (

matches.begin(), matches.end(),

[] ( const cv::DMa tch& m1, const cv::DMatch& m2 )

{

return m1.distance < m2.distance;

} )->distance;

feature_matches_.clear();//清除上次匹配结果

//根据距离筛选特征点

for ( cv::DMatch& m : matches )

{

if ( m.distance < max<float> ( min_dis*match_ratio_, 30.0 ) )

{

feature_matches_.push_back(m);//符合条件的匹配放入feature_matches_

}

}

cout<<"good matches: "<<feature_matches_.size()<<endl;

}

// 引用帧3D点设置

//pnp需要参考帧3D,当前帧2D,所以当前帧迭代为参考帧时,需要加上depth数据

// 每轮循环结束,都需要把当前帧变成参考帧,这时候就需要把当前帧的坐标的2D形式转化成3D形式

void VisualOdometry::setRef3DPoints()

{

// select the features with depth measurements

pts_3d_ref_.clear();

descriptors_ref_ = Mat();

//遍历当前关键点数组遍历

for ( size_t i=0; i<keypoints_curr_.size(); i++ )

{

//找到depth数据

double d = ref_->findDepth(keypoints_curr_[i]);

if ( d > 0)

{

//像素坐标到相机坐标系3D坐标

Vector3d p_cam = ref_->camera_->pixel2camera(//这一块,用curr_->camera也是可以的,因为相机是一样的

Vector2d(keypoints_curr_[i].pt.x, keypoints_curr_[i].pt.y), d

);

//构造Point3f,3D坐标

pts_3d_ref_.push_back( cv::Point3f( p_cam(0,0), p_cam(1,0), p_cam(2,0) ));

//参考帧描述子,将当前帧描述子按行放进去。

descriptors_ref_.push_back(descriptors_curr_.row(i));

}

}

}

// 使用pnp进行位置估计

// PnP估计相机位姿T

// 使用pts_3d_ref_ 、keypoints_curr_、feature_matches_、相机参数----->estimate出相机的T

void VisualOdometry::poseEstimationPnP()

{

// construct the 3d 2d observations

//参考帧3D坐标和当前帧2D坐标(需要.pt像素坐标)

vector<cv::Point3f> pts3d;

vector<cv::Point2f> pts2d;

for ( cv::DMatch m:feature_matches_ )

{

pts3d.push_back( pts_3d_ref_[m.queryIdx] );//query=ref

pts2d.push_back( keypoints_curr_[m.trainIdx].pt );//train=curr

}

//相机内参不变

Mat K = ( cv::Mat_<double>(3,3)<<

ref_->camera_->fx_, 0, ref_->camera_->cx_,

0, ref_->camera_->fy_, ref_->camera_->cy_,

0,0,1

);

//旋转、平移、内点数组

Mat rvec, tvec, inliers;

//核心函数

cv::solvePnPRansac( pts3d, pts2d, K, Mat(), rvec, tvec, false, 100, 4.0, 0.99, inliers );

// 猜测内点数,应该是匹配出的特征点中真正被位姿估计函数使用的那些点

num_inliers_ = inliers.rows;

cout<<"pnp inliers: "<<num_inliers_<<endl;

//由旋转和平移构造出当前帧相对于参考帧的T

T_c_r_estimated_ = SE3(

SO3(rvec.at<double>(0,0), rvec.at<double>(1,0), rvec.at<double>(2,0)),

Vector3d( tvec.at<double>(0,0), tvec.at<double>(1,0), tvec.at<double>(2,0))

);

}

// 位置估计检查,

//位姿检验模块,匹配点不能太少,运动不能太大

bool VisualOdometry::checkEstimatedPose()

{

// check if the estimated pose is good

//匹配点太少,false

if ( num_inliers_ < min_inliers_ )

{

cout<<"reject because inlier is too small: "<<num_inliers_<<endl;

return false;

}

// if the motion is too large, it is probably wrong

//T的模太大,false

Sophus::Vector6d d = T_c_r_estimated_.log();

if ( d.norm() > 5.0 )

{

cout<<"reject because motion is too large: "<<d.norm()<<endl;

return false;

}

return true;

}

// 检查关键帧,T中R或t比较大都是关键帧

bool VisualOdometry::checkKeyFrame()

{

Sophus::Vector6d d = T_c_r_estimated_.log();

Vector3d trans = d.head<3>();

Vector3d rot = d.tail<3>();

if ( rot.norm() >key_frame_min_rot || trans.norm() >key_frame_min_trans )

return true;

return false;

}

// 添加关键帧

void VisualOdometry::addKeyFrame()

{

cout<<"adding a key-frame"<<endl;

map_->insertKeyFrame ( curr_ );

}

}非常糙的初步版本,后面有时间再优化!

版权声明:本文为weixin_36673043原创文章,遵循 CC 4.0 BY-SA 版权协议,转载请附上原文出处链接和本声明。