- 基于经典网络架构训练图像分类模型¶

数据预处理部分:

数据增强:torchvision中transforms模块自带功能,比较实用

数据预处理:torchvision中transforms也帮我们实现好了,直接调用即可

DataLoader模块直接读取batch数据

- 网络模块设置:

加载预训练模型,torchvision中有很多经典网络架构,调用起来十分方便,并且可以用人家训练好的权重参数来继续训练,也就是所谓的迁移学习

需要注意的是别人训练好的任务跟咱们的可不是完全一样,需要把最后的head层改一改,一般也就是最后的全连接层,改成咱们自己的任务

训练时可以全部重头训练,也可以只训练最后咱们任务的层,因为前几层都是做特征提取的,本质任务目标是一致的

- 网络模型保存与测试

模型保存的时候可以带有选择性,例如在验证集中如果当前效果好则保存

读取模型进行实际测试

1 模块导入

import os

import matplotlib.pyplot as plt

%matplotlib inline

import numpy as np

import torch

from torch import nn

import torch.optim as optim

import torchvision

#pip install torchvision

from torchvision import transforms, models, datasets

#https://pytorch.org/docs/stable/torchvision/index.html

#imageio:一个简单的接口来读取和写入各种图像数据

#sys:该模块提供对解释器使用或维护的一些变量的访问,以及与解释器强烈交互的函数

#json:使用 json 模块来对 JSON 数据进行编解码

#PIL是Python平台事实上的图像处理标准库,支持多种格式,并提供强大的图形与图像处理功能。

import imageio

import time

import warnings

import random

import sys

import copy

import json

from PIL import Image

2 数据读取与预处理操作

#路径设置

data_dir = './flower_data/'

train_dir = data_dir + '/train'

valid_dir = data_dir + '/valid'

2.1制作好数据源:

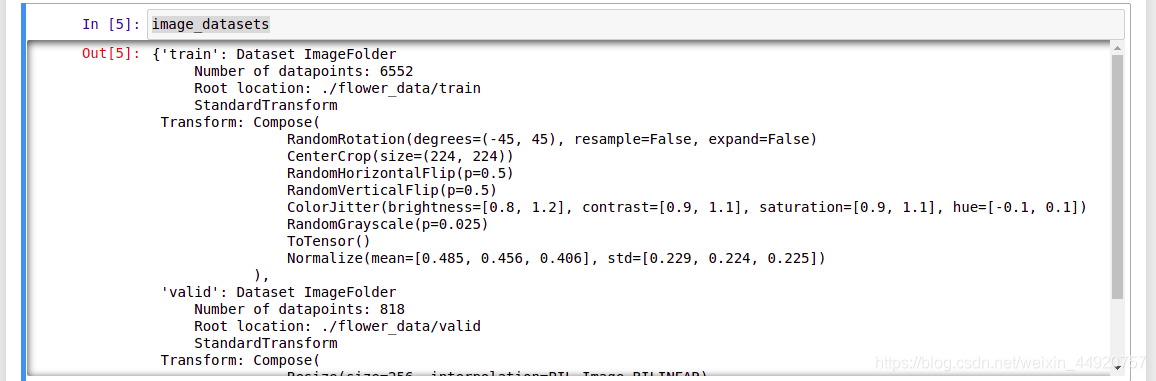

data_transforms中指定了所有图像预处理操作

ImageFolder假设所有的文件按文件夹保存好,每个文件夹下面存贮同一类别的图片,文件夹的名字为分类的名字

#https://blog.csdn.net/weixin_43135178/article/details/115133115

data_transforms = {

#训练集

'train': transforms.Compose([transforms.RandomRotation(45),#随机旋转,-45到45度之间随机选

transforms.CenterCrop(224),#从中心开始裁剪

#P表示概率,有百分之50的概率反转,剩下不反转

transforms.RandomHorizontalFlip(p=0.5),#随机水平翻转 选择一个概率概率

transforms.RandomVerticalFlip(p=0.5),#随机垂直翻转

transforms.ColorJitter(brightness=0.2, contrast=0.1, saturation=0.1, hue=0.1),#参数1为亮度,参数2为对比度,参数3为饱和度,参数4为色相

transforms.RandomGrayscale(p=0.025),#概率转换成灰度率,3通道就是R=G=B

#将h,w,c[0.255]变为c,h,w[0.0,1.0]

transforms.ToTensor(),

#下面是归一化,前面是减均值,后面是比标准差

transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])#均值,标准差

]),

#验证集不需要进行数据增强

'valid': transforms.Compose([transforms.Resize(256),

transforms.CenterCrop(224),

transforms.ToTensor(),

transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])

]),

}

batch_size = 8

image_datasets = {x: datasets.ImageFolder(os.path.join(data_dir,x), data_transforms[x]) for x in ['train', 'valid']}

dataloaders = {x: torch.utils.data.DataLoader(image_datasets[x], batch_size=batch_size, shuffle=True) for x in ['train', 'valid']}

dataset_sizes = {x: len(image_datasets[x]) for x in ['train', 'valid']}

class_names = image_datasets['train'].classes

#查看

image_datasets

dataloaders

dataset_sizes

2.2 读取标签对应的实际名字

with open('cat_to_name.json', 'r') as f:

cat_to_name = json.load(f)

#查看

cat_to_name

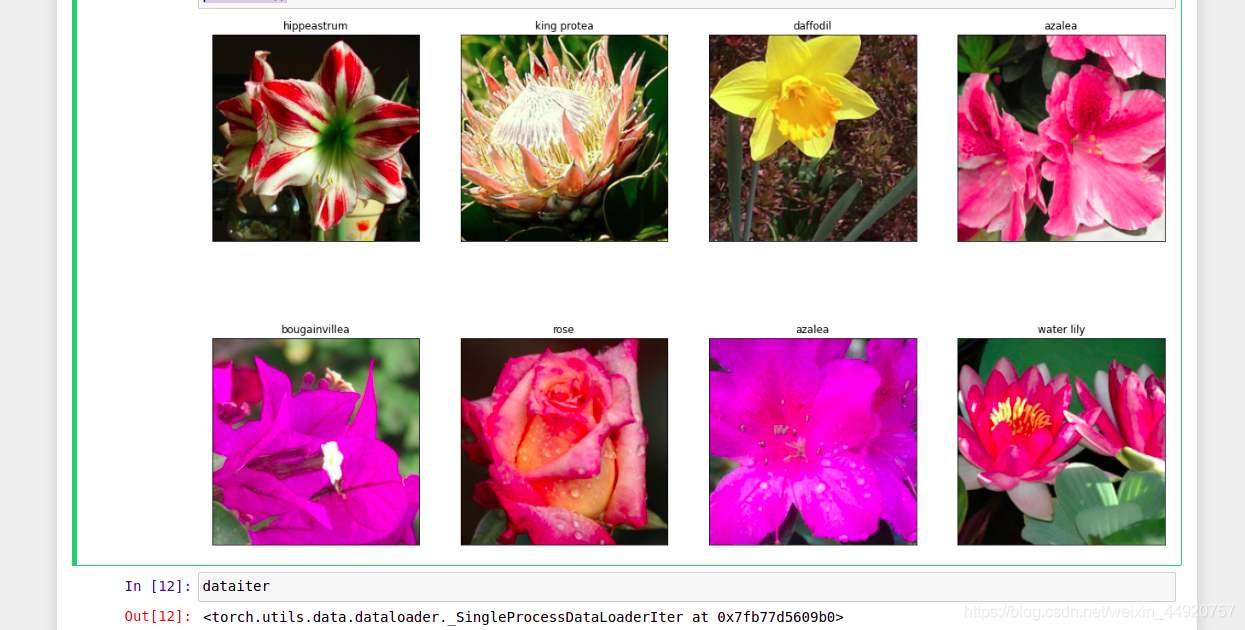

2.3 展示下数据

注意tensor的数据需要转换成numpy的格式,而且还需要还原回标准化的结果

def im_convert(tensor):

""" 展示数据"""

image = tensor.to("cpu").clone().detach()

image = image.numpy().squeeze()

#下面将图像还原回去,利用squeeze()函数将表示向量的数组转换为秩为1的数组,这样利用matplotlib库函数画图

#transpose是调换位置,之前是换成了(c,h,w),需要重新还为(h,w,c)

image = image.transpose(1,2,0)

image = image * np.array((0.229, 0.224, 0.225)) + np.array((0.485, 0.456, 0.406))

#clip的作用是小于0的都换成0,大于1的都变成1

image = image.clip(0, 1)

return image

fig=plt.figure(figsize=(20, 12))

columns = 4

rows = 2

#iter 迭代器

dataiter = iter(dataloaders['valid'])

inputs, classes = dataiter.next()

for idx in range (columns*rows):

ax = fig.add_subplot(rows, columns, idx+1, xticks=[], yticks=[])

ax.set_title(cat_to_name[str(int(class_names[classes[idx]]))])

plt.imshow(im_convert(inputs[idx]))

plt.show()

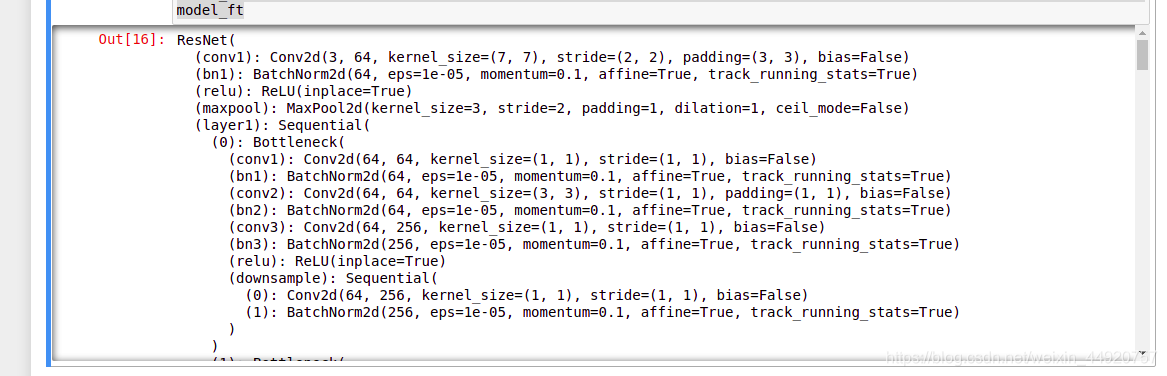

3加载models中提供的模型直接用训练的好权重当做初始化参数

第一次执行需要下载,可能会比较慢,我会提供给大家一份下载好的,可以直接放到相应路径

model_name = 'resnet' #可选的比较多 ['resnet', 'alexnet', 'vgg', 'squeezenet', 'densenet', 'inception']

#是否用人家训练好的特征来做

feature_extract = True

# 是否用GPU训练,GPU当前是否可用

train_on_gpu = torch.cuda.is_available()

if not train_on_gpu:

print('CUDA is not available. Training on CPU ...')

else:

print('CUDA is available! Training on GPU ...')

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

用现成的特征提取,只训练FC

def set_parameter_requires_grad(model, feature_extracting):

if feature_extracting:

for param in model.parameters():

#梯度更新改为False,相当于冻住,模型(resnet)的参数不更新

param.requires_grad = False

#查看resnet

model_ft = models.resnet152()

model_ft

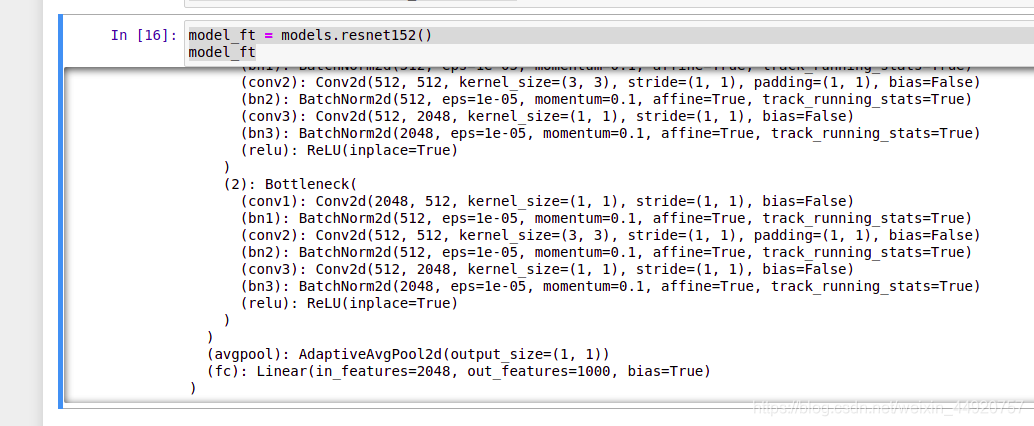

3.1参考pytorch官网例子

def initialize_model(model_name, num_classes, feature_extract, use_pretrained=True):

# 选择合适的模型,不同模型的初始化方法稍微有点区别

model_ft = None

input_size = 0

if model_name == "resnet":

""" Resnet152

"""

#下面是自动下载的resnet的代码,加载预训练网络

model_ft = models.resnet152(pretrained=use_pretrained)

#是否将特征提取的模块冻住,只训练FC层

set_parameter_requires_grad(model_ft, feature_extract)

#获取全连接层输入特征

num_ftrs = model_ft.fc.in_features

#从新加全连接层,从新设置,输出102

model_ft.fc = nn.Sequential(nn.Linear(num_ftrs, 102),

nn.LogSoftmax(dim=1))#dim=0表示对列运算(1是对行运算),且元素和为1;

input_size = 224

elif model_name == "alexnet":

""" Alexnet

"""

model_ft = models.alexnet(pretrained=use_pretrained)

set_parameter_requires_grad(model_ft, feature_extract)

num_ftrs = model_ft.classifier[6].in_features

model_ft.classifier[6] = nn.Linear(num_ftrs,num_classes)

input_size = 224

elif model_name == "vgg":

""" VGG11_bn

"""

model_ft = models.vgg16(pretrained=use_pretrained)

set_parameter_requires_grad(model_ft, feature_extract)

num_ftrs = model_ft.classifier[6].in_features

model_ft.classifier[6] = nn.Linear(num_ftrs,num_classes)

input_size = 224

elif model_name == "squeezenet":

""" Squeezenet

"""

model_ft = models.squeezenet1_0(pretrained=use_pretrained)

set_parameter_requires_grad(model_ft, feature_extract)

model_ft.classifier[1] = nn.Conv2d(512, num_classes, kernel_size=(1,1), stride=(1,1))

model_ft.num_classes = num_classes

input_size = 224

elif model_name == "densenet":

""" Densenet

"""

model_ft = models.densenet121(pretrained=use_pretrained)

set_parameter_requires_grad(model_ft, feature_extract)

num_ftrs = model_ft.classifier.in_features

model_ft.classifier = nn.Linear(num_ftrs, num_classes)

input_size = 224

elif model_name == "inception":

""" Inception v3

Be careful, expects (299,299) sized images and has auxiliary output

"""

model_ft = models.inception_v3(pretrained=use_pretrained)

set_parameter_requires_grad(model_ft, feature_extract)

# Handle the auxilary net

num_ftrs = model_ft.AuxLogits.fc.in_features

model_ft.AuxLogits.fc = nn.Linear(num_ftrs, num_classes)

# Handle the primary net

num_ftrs = model_ft.fc.in_features

model_ft.fc = nn.Linear(num_ftrs,num_classes)

input_size = 299

else:

print("Invalid model name, exiting...")

exit()

return model_ft, input_size

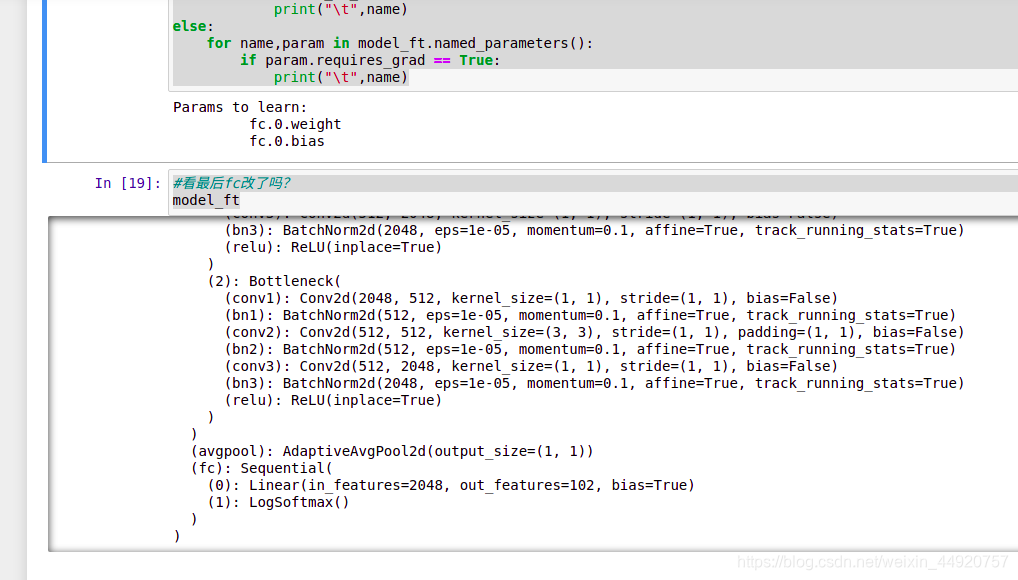

3.2设置哪些层需要训练

model_ft, input_size = initialize_model(model_name, 102, feature_extract, use_pretrained=True)

#GPU计算

model_ft = model_ft.to(device)

# 模型保存,checkpoint是已经训练好的模型,可以直接读取

filename='checkpoint.pth'

# 是否训练所有层,只训练FC层,其他不动

params_to_update = model_ft.parameters()

print("Params to learn:")

if feature_extract:

params_to_update = []

for name,param in model_ft.named_parameters():

if param.requires_grad == True:

params_to_update.append(param)

print("\t",name)

else:

for name,param in model_ft.named_parameters():

if param.requires_grad == True:

print("\t",name)

与上图对比,注意全连接层

4.训练与预测

4.1优化器设置

# 优化器设置

optimizer_ft = optim.Adam(params_to_update, lr=1e-2)

#学习率衰减策略

scheduler = optim.lr_scheduler.StepLR(optimizer_ft, step_size=7, gamma=0.1)#学习率每7个epoch衰减成原来的1/10

#最后一层已经LogSoftmax()了,所以不能nn.CrossEntropyLoss()来计算了,nn.CrossEntropyLoss()相当于logSoftmax()和nn.NLLLoss()整合

criterion = nn.NLLLoss()

4.2训练模块

#is_inception:要不要用其他的网络

def train_model(model, dataloaders, criterion, optimizer, num_epochs=10, is_inception=False,filename=filename):

since = time.time()

#保存最好的准确率

best_acc = 0

"""

checkpoint = torch.load(filename)

best_acc = checkpoint['best_acc']

model.load_state_dict(checkpoint['state_dict'])

optimizer.load_state_dict(checkpoint['optimizer'])

model.class_to_idx = checkpoint['mapping']

"""

#指定用GPU还是CPU

model.to(device)

#下面是为展示做的

val_acc_history = []

train_acc_history = []

train_losses = []

valid_losses = []

LRs = [optimizer.param_groups[0]['lr']]

#最好的一次存下来

best_model_wts = copy.deepcopy(model.state_dict())

for epoch in range(num_epochs):

print('Epoch {}/{}'.format(epoch, num_epochs - 1))

print('-' * 10)

# 训练和验证

for phase in ['train', 'valid']:

if phase == 'train':

model.train() # 训练

else:

model.eval() # 验证

running_loss = 0.0

running_corrects = 0

# 把数据都取个遍

for inputs, labels in dataloaders[phase]:

#下面是将inputs,labels传到GPU

inputs = inputs.to(device)

labels = labels.to(device)

# 清零

optimizer.zero_grad()

# 只有训练的时候计算和更新梯度

#orch.set_grad_enabled()在使用的时候是设置一个上下文环境,也就是说只要设置了torch.set_grad_enabled(False)那么接下来所有的tensor运算产生的新的节点都是不可求导的,

#https://blog.csdn.net/zzzpy/article/details/88873109

with torch.set_grad_enabled(phase == 'train'):

#if这面不需要计算,可忽略

if is_inception and phase == 'train':

outputs, aux_outputs = model(inputs)

loss1 = criterion(outputs, labels)

loss2 = criterion(aux_outputs, labels)

loss = loss1 + 0.4*loss2

else:#resnet执行的是这里

outputs = model(inputs)

loss = criterion(outputs, labels)

#概率最大的返回preds

_, preds = torch.max(outputs, 1)

# 训练阶段更新权重

if phase == 'train':

loss.backward()

optimizer.step()

# 计算损失

running_loss += loss.item() * inputs.size(0)

running_corrects += torch.sum(preds == labels.data)

#打印操作

epoch_loss = running_loss / len(dataloaders[phase].dataset)

epoch_acc = running_corrects.double() / len(dataloaders[phase].dataset)

time_elapsed = time.time() - since

print('Time elapsed {:.0f}m {:.0f}s'.format(time_elapsed // 60, time_elapsed % 60))

print('{} Loss: {:.4f} Acc: {:.4f}'.format(phase, epoch_loss, epoch_acc))

# 得到最好那次的模型

if phase == 'valid' and epoch_acc > best_acc:

best_acc = epoch_acc

#模型保存

best_model_wts = copy.deepcopy(model.state_dict())

state = {

#tate_dict变量存放训练过程中需要学习的权重和偏执系数

'state_dict': model.state_dict(),

'best_acc': best_acc,

'optimizer' : optimizer.state_dict(),

}

torch.save(state, filename)

if phase == 'valid':

val_acc_history.append(epoch_acc)

valid_losses.append(epoch_loss)

scheduler.step(epoch_loss)

if phase == 'train':

train_acc_history.append(epoch_acc)

train_losses.append(epoch_loss)

print('Optimizer learning rate : {:.7f}'.format(optimizer.param_groups[0]['lr']))

LRs.append(optimizer.param_groups[0]['lr'])

print()

time_elapsed = time.time() - since

print('Training complete in {:.0f}m {:.0f}s'.format(time_elapsed // 60, time_elapsed % 60))

print('Best val Acc: {:4f}'.format(best_acc))

# 训练完后用最好的一次当做模型最终的结果

model.load_state_dict(best_model_wts)

return model, val_acc_history, train_acc_history, valid_losses, train_losses, LRs

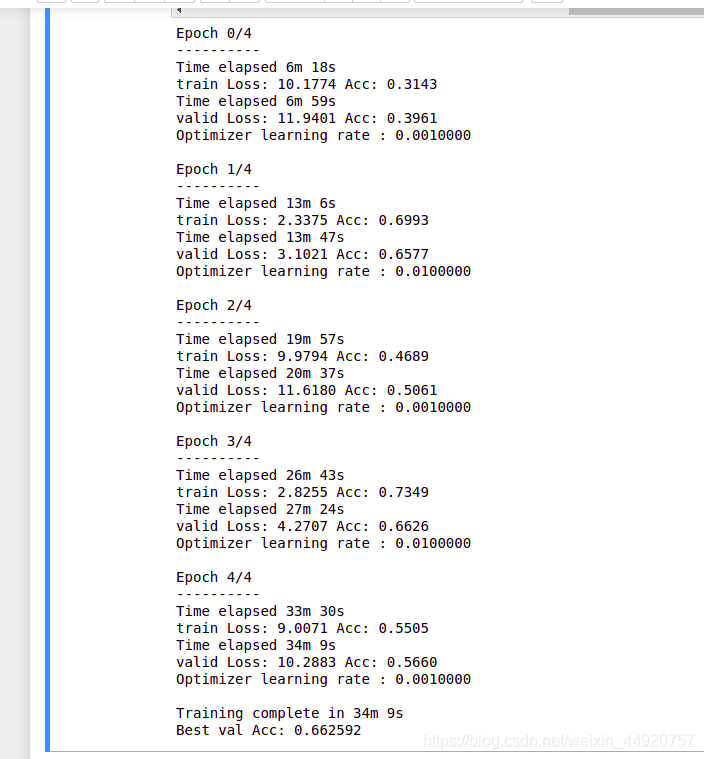

4.3开始训练!

#若太慢,把epoch调低,迭代50次可能好些

#训练时,损失是否下降,准确是否有上升;验证与训练差距大吗?若差距大,就是过拟合

model_ft, val_acc_history, train_acc_history, valid_losses, train_losses, LRs = train_model(model_ft, dataloaders, criterion, optimizer_ft, num_epochs=5, is_inception=(model_name=="inception"))

4.4再继续训练所有层

#全部网络训练

for param in model_ft.parameters():

param.requires_grad = True

# 再继续训练所有的参数,学习率调小一点

optimizer = optim.Adam(params_to_update, lr=1e-4)

scheduler = optim.lr_scheduler.StepLR(optimizer_ft, step_size=7, gamma=0.1)

# 损失函数

criterion = nn.NLLLoss()

# Load the checkpoint

#在之前训练好的基础上进行训练

#下面的路径保存的是刚刚训练的还不错的路径

checkpoint = torch.load(filename)

best_acc = checkpoint['best_acc']

model_ft.load_state_dict(checkpoint['state_dict'])

optimizer.load_state_dict(checkpoint['optimizer'])

#model_ft.class_to_idx = checkpoint['mapping']

model_ft, val_acc_history, train_acc_history, valid_losses, train_losses, LRs = train_model(model_ft, dataloaders, criterion, optimizer, num_epochs=10, is_inception=(model_name=="inception"))

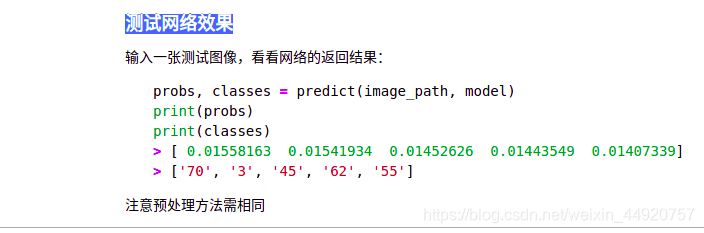

4.5测试网络效果

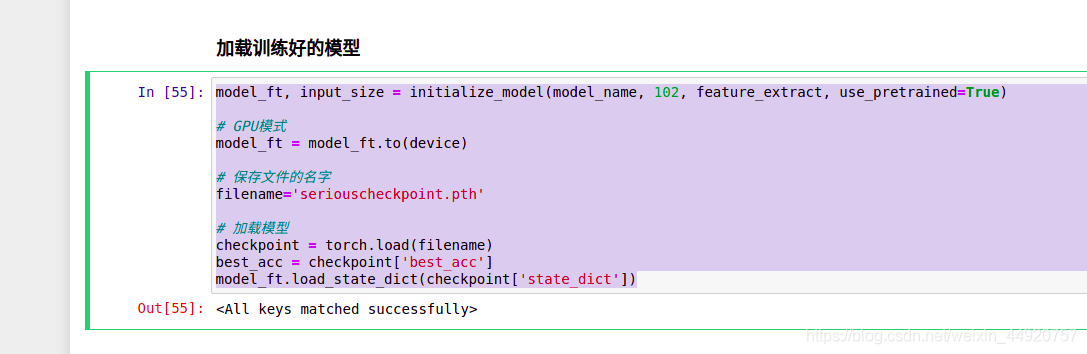

4.6 加载训练好的模型

model_ft, input_size = initialize_model(model_name, 102, feature_extract, use_pretrained=True)

# GPU模式

model_ft = model_ft.to(device)

# 保存文件的名字

filename='seriouscheckpoint.pth'

# 加载模型

checkpoint = torch.load(filename)

best_acc = checkpoint['best_acc']

model_ft.load_state_dict(checkpoint['state_dict'])

4.7测试数据预处理

-

测试数据处理方法需要跟训练时一直才可以 crop操作的目的是保证输入的大小是一致的

-

标准化操作也是必须的,用跟训练数据相同的mean和std,但是需要注意一点训练数据是在0-1上进行标准化,所以测试数据也需要先归一化

-

最后一点,PyTorch中颜色通道是第一个维度,跟很多工具包都不一样,需要转换

def process_image(image_path):

# 读取测试数据

img = Image.open(image_path)

# Resize,thumbnail方法只能进行比例缩小,所以进行了判断

#https://blog.csdn.net/kethur/article/details/79992539#commentBox

if img.size[0] > img.size[1]:

img.thumbnail((10000, 256))

else:

img.thumbnail((256, 10000))

# Crop操作,将图像再次裁减224*224

left_margin = (img.width-224)/2

bottom_margin = (img.height-224)/2

right_margin = left_margin + 224

top_margin = bottom_margin + 224

#https://blog.csdn.net/weixin_41770169/article/details/94600505#commentBox

img = img.crop((left_margin, bottom_margin, right_margin,

top_margin))

# 相同的预处理方法

#归一化

img = np.array(img)/255

mean = np.array([0.485, 0.456, 0.406]) #provided mean

std = np.array([0.229, 0.224, 0.225]) #provided std

img = (img - mean)/std

# 注意颜色通道应该放在第一个位置,#注意通道位置,每个可能不一样

img = img.transpose((2, 0, 1))

return img

def imshow(image, ax=None, title=None):

"""展示数据"""

if ax is None:

fig, ax = plt.subplots()

# 颜色通道还原

image = np.array(image).transpose((1, 2, 0))

# 预处理还原

mean = np.array([0.485, 0.456, 0.406])

std = np.array([0.229, 0.224, 0.225])

image = std * image + mean

image = np.clip(image, 0, 1)

ax.imshow(image)

ax.set_title(title)

return ax

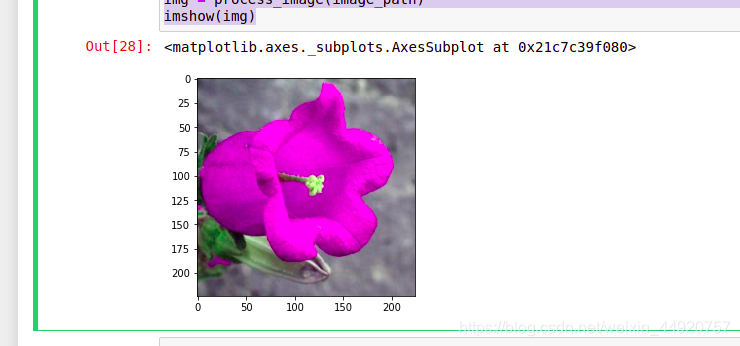

image_path = 'image_06621.jpg'

img = process_image(image_path)

imshow(img)

img.shape

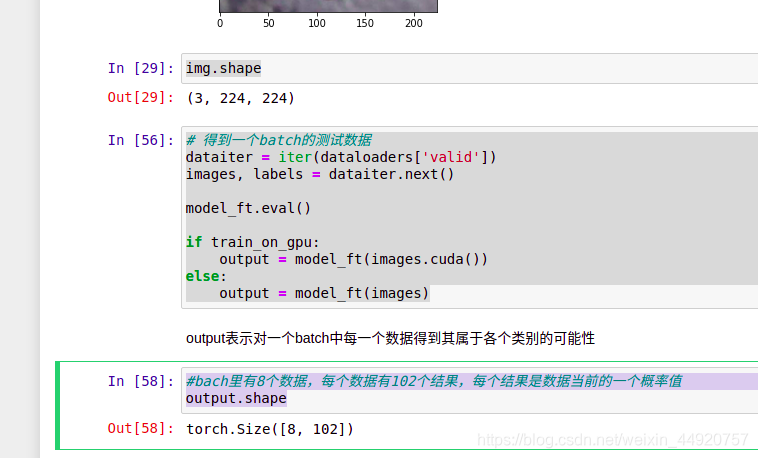

# 得到一个batch的测试数据

dataiter = iter(dataloaders['valid'])

images, labels = dataiter.next()

model_ft.eval()

if train_on_gpu:

output = model_ft(images.cuda())

else:

output = model_ft(images)

#bach里有8个数据,每个数据有102个结果,每个结果是数据当前的一个概率值

output.shape

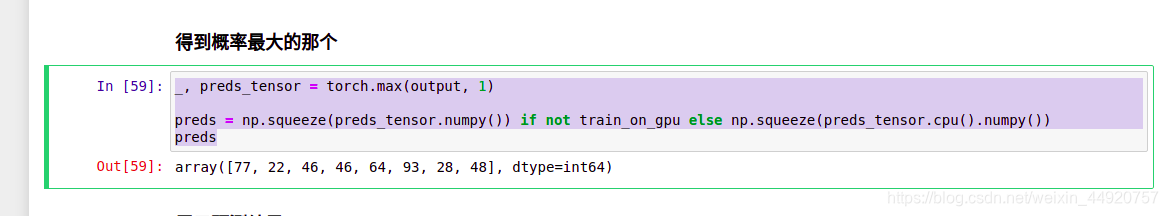

4.8得到概率最大的那个

_, preds_tensor = torch.max(output, 1)

preds = np.squeeze(preds_tensor.numpy()) if not train_on_gpu else np.squeeze(preds_tensor.cpu().numpy())

preds

4.9展示预测结果

fig=plt.figure(figsize=(20, 20))

columns =4

rows = 2

for idx in range (columns*rows):

ax = fig.add_subplot(rows, columns, idx+1, xticks=[], yticks=[])

plt.imshow(im_convert(images[idx]))

ax.set_title("{} ({})".format(cat_to_name[str(preds[idx])], cat_to_name[str(labels[idx].item())]),

color=("green" if cat_to_name[str(preds[idx])]==cat_to_name[str(labels[idx].item())] else "red"))

plt.show()

#绿的表示预测对的,红色表示预测错