首先我们知道

Hog特征结合

SVM分类器已经被广泛应用于图像识别中,尤其在行人检测中获得了极大的成功

,HOG+SVM进行行人检测的方法是法国研究人员

Dalal在

2005的

CVPR上提出的,而如今虽然有很多行人检测算法不断提出,但基本都是以

HOG+SVM的思路为主,那么PCL中也是利用这一思想来进行行人的检测,

总体思路:

1、提取正负样本hog特征

2、投入svm分类器训练,得到model

3、由model生成检测子

4、利用检测子检测负样本,得到hardexample

5、提取hardexample的hog特征并结合第一步中的特征一起投入训练,得到最终检测子。

首先整合一下理论知识

HOG特征:

方向梯度直方图(

Histogram of Oriented Gradient, HOG)特征是一种在计算机视觉和图像处理中用来进行物体检测的特征描述子。它通过计算和统计图像局部区域的梯度方向直方图来构成特征。

具体的是实现方法:

首先将图像分成小的连通区域,我们把它叫细胞单元。然后采集细胞单元中各像素点的梯度的或边缘的方向直方图。最后把这些直方图组合起来就可以构成特征描述器。

提高性能:

把这些局部直方图在图像的更大的范围内(我们把它叫区间或

block)进行对比度归一化(

contrast-normalized),所采用的方法是:先计算各直方图在这个区间(

block)中的密度,然后根据这个密度对区间中的各个细胞单元做归一化。通过这个归一化后,能对光照变化和阴影获得更好的效果。

优点:

与其他的特征描述方法相比,

HOG有很多优点。首先,由于

HOG是 在图像的局部方格单元上操作,所以它对图像几何的和光学的形变都能保持很好的不变性,这两种形变只会出现在更大的空间领域上。其次,在粗的空域抽样、精细 的方向抽样以及较强的局部光学归一化等条件下,只要行人大体上能够保持直立的姿势,可以容许行人有一些细微的肢体动作,这些细微的动作可以被忽略而不影响 检测效果。因此

HOG特征是特别适合于做图像中的人体检测的。

HOG特征提取算法的实现过程:

1)

HOG特征提取方法就是将一个

image(你要检测的目标或者扫描窗口)

灰度化(

x,y,z的三维图像);

2)采用

Gamma校正法对输入图像进行颜色空间的标准化(归一化);目的是调节图像的对比度,降低图像局部的阴影和光照变化所造成的影响,同时可以抑制噪音的干扰;

3)计算图像每个像素的梯度(包括大小和方向);主要是为了捕获轮廓信息,同时进一步弱化光照的干扰。

4)将图像划分成小

cells(例如

6*6像素

/cell);

5)统计每个

cell的梯度直方图(不同梯度的个数),即可形成每个

cell的

descriptor;

6)将每几个

cell组成一个

block(例如

3*3个

cell/block),一个

block内所有

cell的特征

descriptor串联起来便得到该

block的

HOG特征

descriptor。

7)将图像

image内的所有

block的

HOG特征

descriptor串联起来就可以得到该

image(你要检测的目标)的

HOG特征

descriptor了。这个就是最终的可供分类使用的特征向量了。

关于SVM可以参看www.opencv.org.cn/opencvdoc/2.3.2/html/doc/tutorials/ml/introduction_to_svm/introduction_to_svm.html

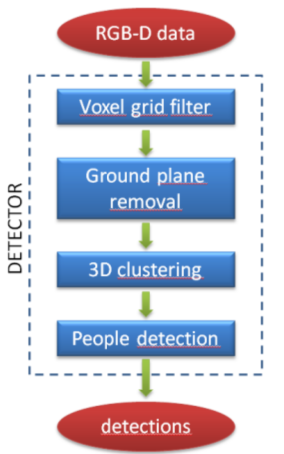

(2)那么使用PCL 检测行人该如何使用呢?

目标: detecting people walking on the ground plane

输入:RGBXYZ pointcloud ground coeficients

输出:people clusters

流程图:

步骤

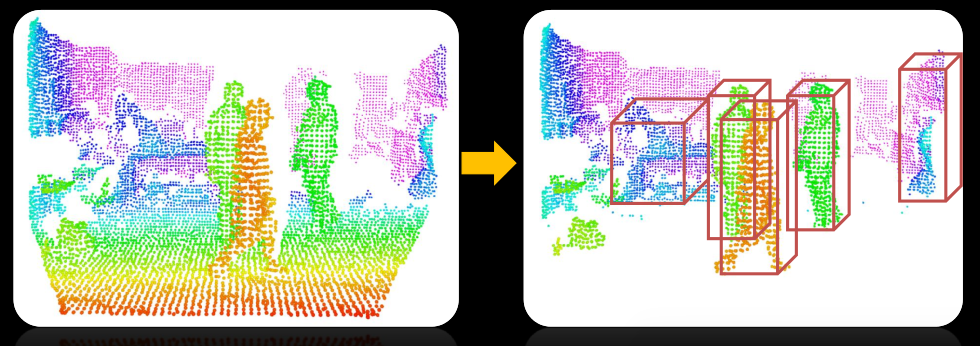

detected on point cloud

(1)groud plane estimation and removal

(2)Euclidean clustering of remaining points

(3)people vertically splitted int more clusters

sub-clustering procedure

(4)candidates pruning based on height from the ground plane

(5) merging of clusters close in groud plane coordinates

(6)Subdivision of big clusters by means of head detection

HOG+SVM evaluation

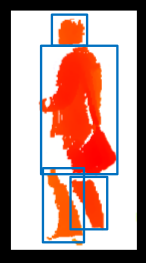

(7)candidate clusters are extened until the ground for achieving robustness to lower limbs occlusion

(8)HOG detector applied to image patches that are projection of 3Dclusters onto the RGB image

pcl::people代码的构架

GroundBasedPeopleDetectionAPP<PointT>

________________|_____________________

| | |

PersonClassifier<pcl::RGB> PersonCluster<PointT> headBasedSubclustering<pointT>

| |

HOG detector HeightMap2D<PointT>

代码解析

#include <pcl/console/parse.h> //命令行解析头文件 #include <pcl/point_types.h> //点云数据类型 #include <pcl/visualization/pcl_visualizer.h> //可视化头文件 #include <pcl/io/openni_grabber.h> //kinect抓取的头文件 #include <pcl/sample_consensus/sac_model_plane.h> //sac_model_plane随机采样一致形平面的模板 #include <pcl/people/ground_based_people_detection_app.h> #include <pcl/common/time.h> typedef pcl::PointXYZRGBA PointT; typedef pcl::PointCloud<PointT> PointCloudT; // PCL viewer // pcl::visualization::PCLVisualizer viewer("PCL Viewer"); //可视化 // Mutex: // boost::mutex cloud_mutex; enum { COLS = 640, ROWS = 480 }; //窗口大小 int print_help() { cout << "*******************************************************" << std::endl; cout << "Ground based people detection app options:" << std::endl; cout << " --help <show_this_help>" << std::endl; cout << " --svm <path_to_svm_file>" << std::endl; cout << " --conf <minimum_HOG_confidence (default = -1.5)>" << std::endl; cout << " --min_h <minimum_person_height (default = 1.3)>" << std::endl; cout << " --max_h <maximum_person_height (default = 2.3)>" << std::endl; cout << " --sample <sampling_factor (default = 1)>" << std::endl; cout << "*******************************************************" << std::endl; return 0; } void cloud_cb_ (const PointCloudT::ConstPtr &callback_cloud, PointCloudT::Ptr& cloud, bool* new_cloud_available_flag) { cloud_mutex.lock (); // for not overwriting the point cloud from another thread锁住进程防止从其他进程写入点云 *cloud = *callback_cloud; //形参点云赋值 *new_cloud_available_flag = true; //标志位赋值为真 cloud_mutex.unlock (); //解锁进程 } //申明结构体用于传递参数给回调函数 struct callback_args{ // structure used to pass arguments to the callback function PointCloudT::Ptr clicked_points_3d; //按键三次 pcl::visualization::PCLVisualizer::Ptr viewerPtr; //可视化 }; void pp_callback (const pcl::visualization::PointPickingEvent& event, void* args) { struct callback_args* data = (struct callback_args *)args; if (event.getPointIndex () == -1) return; PointT current_point; event.getPoint(current_point.x, current_point.y, current_point.z); data->clicked_points_3d->points.push_back(current_point); // Draw clicked points in red: pcl::visualization::PointCloudColorHandlerCustom<PointT> red (data->clicked_points_3d, 255, 0, 0); data->viewerPtr->removePointCloud("clicked_points"); data->viewerPtr->addPointCloud(data->clicked_points_3d, red, "clicked_points"); data->viewerPtr->setPointCloudRenderingProperties(pcl::visualization::PCL_VISUALIZER_POINT_SIZE, 10, "clicked_points"); std::cout << current_point.x << " " << current_point.y << " " << current_point.z << std::endl; } int main (int argc, char** argv) { if(pcl::console::find_switch (argc, argv, "--help") || pcl::console::find_switch (argc, argv, "-h")) return print_help(); // Algorithm parameters:算法参数 std::string svm_filename = "/home/salm/myopencv/yl_pcl/people/data/trainedLinearSVMForPeopleDetectionWithHOG.yaml"; float min_confidence = -1.5; float min_width = 0.1; float max_width = 8.0; float min_height = 1.3; float max_height = 2.3; float voxel_size = 0.06; //体素大小 float sampling_factor = 1; //采样因子 Eigen::Matrix3f rgb_intrinsics_matrix; rgb_intrinsics_matrix << 525, 0.0, 319.5, 0.0, 525, 239.5, 0.0, 0.0, 1.0; // Kinect RGB camera intrinsics相机的内参 // Read if some parameters are passed from command line: pcl::console::parse_argument (argc, argv, "--svm", svm_filename); pcl::console::parse_argument (argc, argv, "--conf", min_confidence); pcl::console::parse_argument (argc, argv, "--min_h", min_height); pcl::console::parse_argument (argc, argv, "--max_h", max_height); pcl::console::parse_argument (argc, argv, "--sample", sampling_factor); // Read Kinect live stream: 读取kienct的数据流 PointCloudT::Ptr cloud (new PointCloudT); bool new_cloud_available_flag = false; pcl::Grabber* interface = new pcl::OpenNIGrabber(); boost::function<void (const pcl::PointCloud<pcl::PointXYZRGBA>::ConstPtr&)> f = boost::bind (&cloud_cb_, _1, cloud, &new_cloud_available_flag); interface->registerCallback (f); interface->start (); // Wait for the first frame:等待第一帧数据流 while(!new_cloud_available_flag) boost::this_thread::sleep(boost::posix_time::milliseconds(1)); new_cloud_available_flag = false; cloud_mutex.lock (); // for not overwriting the point cloud锁住进程 // Display pointcloud:显示点云 pcl::visualization::PointCloudColorHandlerRGBField<PointT> rgb(cloud); viewer.addPointCloud<PointT> (cloud, rgb, "input_cloud"); viewer.setCameraPosition(0,0,-2,0,-1,0,0); //设置相机的位置 // Add point picking callback to viewer: struct callback_args cb_args; PointCloudT::Ptr clicked_points_3d (new PointCloudT); cb_args.clicked_points_3d = clicked_points_3d; cb_args.viewerPtr = pcl::visualization::PCLVisualizer::Ptr(&viewer); viewer.registerPointPickingCallback (pp_callback, (void*)&cb_args); std::cout << "Shift+click on three floor points, then press 'Q'..." << std::endl; // Spin until 'Q' is pressed: viewer.spin(); std::cout << "done." << std::endl; cloud_mutex.unlock (); // Ground plane estimation: Eigen::VectorXf ground_coeffs; ground_coeffs.resize(4); std::vector<int> clicked_points_indices; for (unsigned int i = 0; i < clicked_points_3d->points.size(); i++) clicked_points_indices.push_back(i); pcl::SampleConsensusModelPlane<PointT> model_plane(clicked_points_3d); model_plane.computeModelCoefficients(clicked_points_indices,ground_coeffs); std::cout << "Ground plane: " << ground_coeffs(0) << " " << ground_coeffs(1) << " " << ground_coeffs(2) << " " << ground_coeffs(3) << std::endl; // Initialize new viewer:可视化初始化 pcl::visualization::PCLVisualizer viewer("PCL Viewer"); // viewer initialization viewer.setCameraPosition(0,0,-2,0,-1,0,0); // Create classifier for people detection:创建分类器 pcl::people::PersonClassifier<pcl::RGB> person_classifier; person_classifier.loadSVMFromFile(svm_filename); // load trained SVM // People detection app initialization: 检测初始化 pcl::people::GroundBasedPeopleDetectionApp<PointT> people_detector; // people detection object people_detector.setVoxelSize(voxel_size); // set the voxel size people_detector.setIntrinsics(rgb_intrinsics_matrix); // set RGB camera intrinsic parameters people_detector.setClassifier(person_classifier); // set person classifier //people_detector.setPersonClusterLimits(min_height, max_height, min_width, max_width); people_detector.setSamplingFactor(sampling_factor); // set a downsampling factor to the point cloud (for increasing speed) // people_detector.setSensorPortraitOrientation(true); // set sensor orientation to vertical // For timing: static unsigned count = 0; static double last = pcl::getTime (); // Main loop: while (!viewer.wasStopped()) { if (new_cloud_available_flag && cloud_mutex.try_lock ()) // if a new cloud is available { new_cloud_available_flag = false; // Perform people detection on the new cloud:显示检测到的新点云 std::vector<pcl::people::PersonCluster<PointT> > clusters; // vector containing persons clusters people_detector.setInputCloud(cloud); people_detector.setGround(ground_coeffs); // set floor coefficients people_detector.compute(clusters); // perform people detection ground_coeffs = people_detector.getGround(); // get updated floor coefficients // Draw cloud and people bounding boxes in the viewer: viewer.removeAllPointClouds(); viewer.removeAllShapes(); pcl::visualization::PointCloudColorHandlerRGBField<PointT> rgb(cloud); viewer.addPointCloud<PointT> (cloud, rgb, "input_cloud"); unsigned int k = 0; for(std::vector<pcl::people::PersonCluster<PointT> >::iterator it = clusters.begin(); it != clusters.end(); ++it) { if(it->getPersonConfidence() > min_confidence) // draw only people with confidence above a threshold { // draw theoretical person bounding box in the PCL viewer: it->drawTBoundingBox(viewer, k); k++; } } std::cout << k << " people found" << std::endl; viewer.spinOnce(); // Display average framerate: if (++count == 30) { double now = pcl::getTime (); std::cout << "Average framerate: " << double(count)/double(now - last) << " Hz" << std::endl; count = 0; last = now; } cloud_mutex.unlock (); } }

但是我们知道这需要其他的头文件配合。那么其他程序就不再一一贴出来。PCL源码里也有,但是如果你直接编译是编译不过去的,所以需要我们自己写一个CMakeLists.txt文件来编译。

暂时就到这里了。。。。。。

微信公众号号可扫描二维码一起共同学习交流